Your test suite turned red overnight. Nobody pushed a breaking change. Nobody touched the test files. A developer renamed a CSS class from submit-button to primary-cta, and 47 tests collapsed.

This is the most common story in test automation. Not bugs in the product. Bugs in the tests themselves. And teams burn an absurd amount of time dealing with it.

Between 60% and 80% of total automation effort goes to maintenance. Not writing new tests. Not catching regressions. Just keeping existing tests alive while the UI evolves underneath them. Google found that about 16% of their tests show some level of flakiness, costing over 2% of total engineering time. For a 50-developer team, that's roughly one full person-year wasted annually on tests that break for no good reason.
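The person-year figure is simple arithmetic on the 2% estimate. Here is a minimal sketch, assuming lost time scales linearly with headcount (the function name is illustrative):

```python
def flaky_test_cost_in_person_years(team_size: int, share_of_time_lost: float) -> float:
    """Engineering time lost per year, expressed as full-time people."""
    # Assumes every developer loses the same share of their time.
    return team_size * share_of_time_lost


cost = flaky_test_cost_in_person_years(team_size=50, share_of_time_lost=0.02)
print(f"{cost:.1f} person-years lost per year")  # → 1.0 person-years lost per year
```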

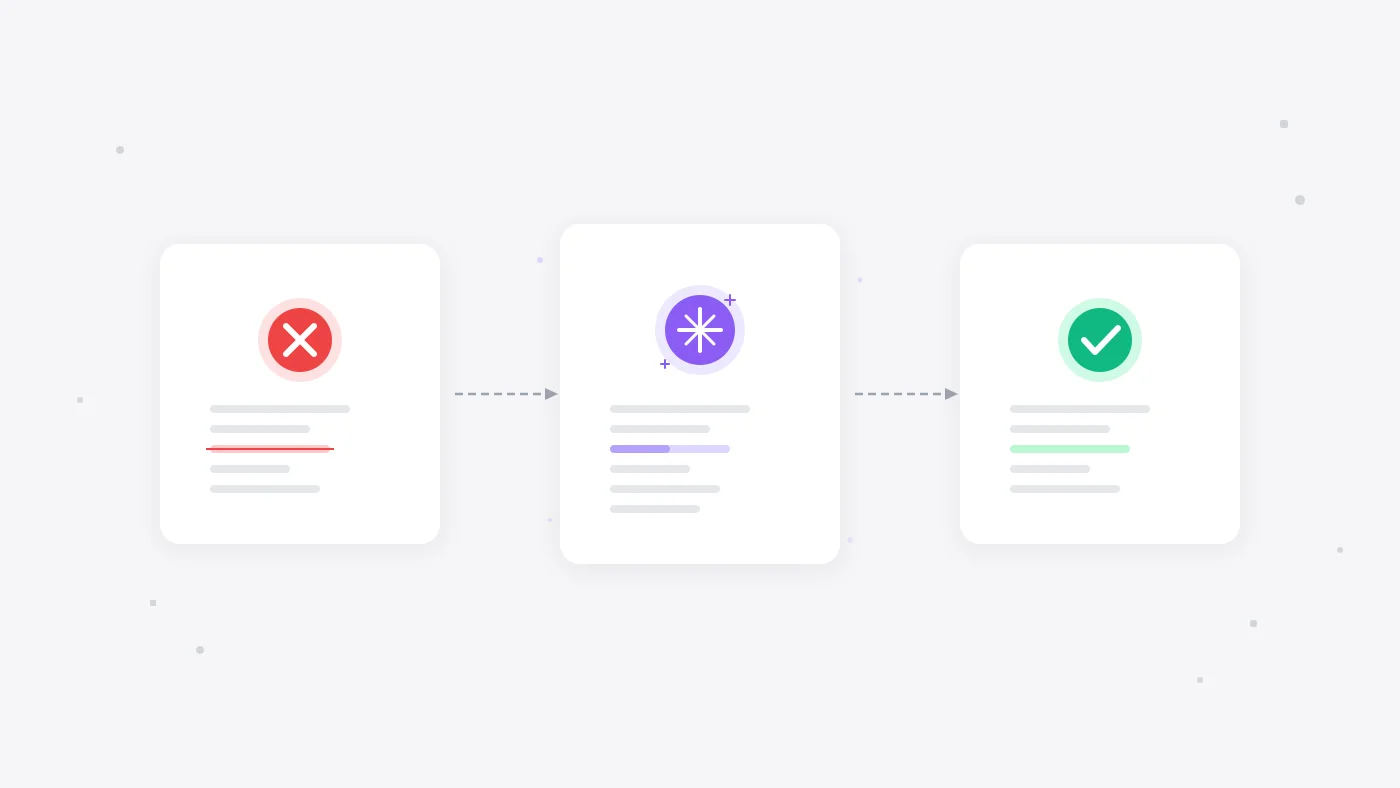

Self-healing tests are the fix. They detect when something changed, figure out what the element actually is now, and keep running. No manual intervention. No 6am Slack messages about the pipeline being red again.

But there's a lot of noise around this topic. Some of it is real, some of it is vendor marketing. Let's break down what actually works.

Why tests break (it's not just selectors)

The common assumption is that self-healing is about fixing broken CSS selectors. That's part of the story, but only part of it.

QA Wolf published research showing that only 28% of test failures come from selector changes. The rest break down like this:

- Timing issues. The page hasn't finished loading, an animation is still running, or an API call takes longer than expected. The test clicks before the button exists.

- Runtime errors. The application itself threw a 500, a third-party script failed, or a feature flag changed behavior. The test was fine. The app wasn't.

- Test data problems. The test user got deleted, the product went out of stock, or the database was in an unexpected state. Nothing in the UI changed at all.

- Visual and layout changes. The element is technically there, but it moved behind a modal, scrolled off screen, or got covered by a cookie banner.

- Interaction changes. A form that used to be one page is now a multi-step wizard. The login flow added a CAPTCHA. The checkout process has a new confirmation step.

Fixing only the selector problem means you are solving 28% of the issue and calling it a day. Real self-healing needs to handle all of these.
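Timing issues, the first category above, are usually handled with explicit waits rather than healing. Here is a minimal, framework-agnostic sketch of a polling wait, assuming a zero-argument `condition` callable that reports when the page is ready. `wait_until` is a hypothetical helper; browser frameworks such as Playwright and Selenium ship their own built-in waiting mechanisms.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 5.0, interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse.

    Returns True if the condition was met, False on timeout. Unlike a fixed
    sleep, this is neither too short (flaky) nor longer than necessary (slow).
    """
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


# Simulate a button that only appears on the third check.
checks = {"count": 0}


def button_rendered() -> bool:
    checks["count"] += 1
    return checks["count"] >= 3


print(wait_until(button_rendered, timeout=2.0, interval=0.01))  # → True
```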

How self-healing actually works

There are a few different approaches, and they sit on a spectrum from simple to sophisticated.

Multi-locator fallback chains. The most basic form. When the primary selector fails, the system tries alternatives: ID, CSS, XPath, ARIA label, text content, data-testid. Healenium, an open-source option, stores the full DOM tree path and element attributes in a database. When a NoSuchElementException fires, Healenium retrieves the stored DOM, compares it to the current page using a weighted similarity algorithm, and generates ranked candidate locators. It's fast, deterministic, and handles simple selector renames well. But it falls apart when the page structure changes significantly.
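A minimal sketch of the ordered-fallback idea, assuming a toy page modeled as a list of attribute dictionaries instead of a live DOM. `Locator`, `FallbackResult`, and `find_with_fallback` are hypothetical names, and this shows only the fallback step, not Healenium's stored-DOM comparison. It also records whether a fallback was used, so a heal is visible rather than silent.

```python
from dataclasses import dataclass
from typing import Optional

# Toy page model: each element is a dict of attributes (real tools query a live DOM).
Element = dict


@dataclass
class Locator:
    attribute: str  # e.g. "id", "class", "aria-label", "text", "data-testid"
    value: str

    def matches(self, element: Element) -> bool:
        return element.get(self.attribute) == self.value


@dataclass
class FallbackResult:
    element: Optional[Element]
    used: Optional[Locator]
    healed: bool  # True when the primary locator failed and a fallback matched


def find_with_fallback(page: list[Element], chain: list[Locator]) -> FallbackResult:
    """Try each locator in order and report which one matched."""
    for position, locator in enumerate(chain):
        hits = [el for el in page if locator.matches(el)]
        if len(hits) == 1:  # accept only an unambiguous match
            return FallbackResult(hits[0], locator, healed=position > 0)
    return FallbackResult(None, None, healed=False)


# The class was renamed from submit-button to primary-cta overnight.
page = [
    {"tag": "button", "class": "primary-cta", "text": "Submit", "data-testid": "submit"},
    {"tag": "a", "class": "link", "text": "Cancel"},
]
chain = [
    Locator("class", "submit-button"),  # primary selector, now stale
    Locator("data-testid", "submit"),
    Locator("text", "Submit"),
]
result = find_with_fallback(page, chain)
print(result.healed, result.used)  # → True Locator(attribute='data-testid', value='submit')
```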

Multi-attribute fingerprinting. Instead of relying on one locator, the system builds a rich profile of each element: its ID, visible text, ARIA role, position on screen, parent element, and surrounding context. When one attribute changes, the others provide enough signal to re-identify the element. Virtuoso reports 95% healing accuracy with this approach. Better than fallback chains, but still fundamentally tied to the DOM.
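Fingerprinting can be sketched as weighted attribute agreement between a stored profile and each element on the current page. The weights and the 0.6 acceptance threshold below are hypothetical values chosen for illustration; this is not Virtuoso's actual scoring.

```python
# Hypothetical weights: how strongly each attribute signals identity.
WEIGHTS = {"id": 3.0, "text": 2.0, "role": 1.5, "parent": 1.0, "position": 0.5}


def similarity(fingerprint: dict, candidate: dict) -> float:
    """Weighted share of the fingerprint's attributes the candidate still matches, in [0, 1]."""
    total = sum(WEIGHTS[attr] for attr in fingerprint if attr in WEIGHTS)
    matched = sum(
        WEIGHTS[attr]
        for attr, value in fingerprint.items()
        if attr in WEIGHTS and candidate.get(attr) == value
    )
    return matched / total if total else 0.0


def reidentify(fingerprint: dict, page: list, threshold: float = 0.6):
    """Return the best-scoring element if it clears the threshold, else None."""
    if not page:
        return None
    best = max(page, key=lambda el: similarity(fingerprint, el))
    return best if similarity(fingerprint, best) >= threshold else None


# Recorded when the test was written.
fingerprint = {"id": "submit-btn", "text": "Place order", "role": "button",
               "parent": "form#checkout", "position": "bottom-right"}
# Today's page: the id changed, everything else survived.
page = [
    {"id": "order-cta", "text": "Place order", "role": "button",
     "parent": "form#checkout", "position": "bottom-right"},
    {"id": "cancel", "text": "Cancel", "role": "button",
     "parent": "form#checkout", "position": "bottom-left"},
]
print(reidentify(fingerprint, page)["id"])  # → order-cta
```

The changed id costs 3 of 8 weight points, so the real button still scores 0.625 while the Cancel button scores only 0.3125.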

AI/ML-based element finding. Machine learning models trained on historical test runs learn patterns about how elements change over time. When a failure occurs, the model predicts the most likely updated element based on the full page context. This handles structural changes that rule-based approaches miss.
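Production tools train real models on this history. As a deliberately simple stand-in, the sketch below "learns" how far to trust each attribute from past snapshots of the same element (attributes that rarely changed get higher weight), then uses those weights to pick today's match. The snapshot format and function names are assumptions for illustration, not any vendor's method.

```python
from collections import Counter


def learn_attribute_stability(history: list) -> dict:
    """Per attribute, the fraction of consecutive snapshots in which it stayed the same.

    `history` holds snapshots of one logical element from past runs, oldest first.
    This frequency estimate stands in for a trained model.
    """
    unchanged, seen = Counter(), Counter()
    for before, after in zip(history, history[1:]):
        for attr in before.keys() & after.keys():
            seen[attr] += 1
            if before[attr] == after[attr]:
                unchanged[attr] += 1
    return {attr: unchanged[attr] / seen[attr] for attr in seen}


def best_match(last_known: dict, page: list, weights: dict) -> dict:
    """Pick the element that agrees with the last snapshot on the most stable attributes."""
    def score(el: dict) -> float:
        return sum(w for attr, w in weights.items()
                   if attr in last_known and el.get(attr) == last_known[attr])
    return max(page, key=score)


history = [
    {"id": "btn-1", "class": "submit-button", "text": "Submit", "role": "button"},
    {"id": "btn-2", "class": "submit-button", "text": "Submit", "role": "button"},
    {"id": "btn-3", "class": "primary-cta", "text": "Submit", "role": "button"},
]
weights = learn_attribute_stability(history)  # ids churn every run; text and role never changed

page = [
    {"id": "btn-9", "class": "cta-v2", "text": "Submit", "role": "button"},
    {"id": "btn-3", "class": "primary-cta", "text": "Cancel", "role": "link"},
]
print(best_match(history[-1], page, weights)["id"])  # → btn-9
```

The second element matches the old id and class exactly, but history says those attributes are unreliable, so the learned weights favor the element whose text and role survived.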

LLM-powered semantic matching. The 2026 evolution. When classical approaches fail, a large language model analyzes the page source, accessibility tree, and screenshots to understand what an element means rather than what it looks like in code. Katalon Studio 11 shipped this in January 2026 as a second-tier healing mechanism behind their classical matcher. It's slower but catches cases that pattern matching can't.
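The two-tier arrangement can be sketched as an escalation: a fast deterministic matcher runs first, and the slower semantic matcher is called only when it fails. The LLM is replaced here by a hypothetical synonym-table stub so the example runs offline; `tiered_heal` and both matchers are illustrative names, not Katalon's API.

```python
from typing import Callable, Optional, Tuple

Element = dict
# A matcher receives a description of the lost element plus the current page.
Matcher = Callable[[dict, list], Optional[Element]]


def tiered_heal(target: dict, page: list, classical: Matcher,
                semantic: Matcher) -> Tuple[Optional[Element], str]:
    """Run the fast deterministic matcher first; escalate to the slow semantic one only on failure."""
    match = classical(target, page)
    if match is not None:
        return match, "classical"
    match = semantic(target, page)  # in a real tool: an LLM over page source, a11y tree, screenshot
    if match is not None:
        return match, "semantic"
    return None, "unhealed"


def exact_text(target: dict, page: list) -> Optional[Element]:
    return next((el for el in page if el.get("text") == target["text"]), None)


def stub_semantic(target: dict, page: list) -> Optional[Element]:
    # Hypothetical stand-in for an LLM: a fixed table of phrasings with the same meaning.
    meanings = {"Sign in": {"Sign in", "Log in", "Continue with email"}}
    wanted = meanings.get(target["text"], {target["text"]})
    return next((el for el in page if el.get("text") in wanted), None)


page = [{"tag": "button", "text": "Log in"}]
print(tiered_heal({"text": "Sign in"}, page, exact_text, stub_semantic))
# → ({'tag': 'button', 'text': 'Log in'}, 'semantic')
```

Returning the tier name alongside the match keeps the slower path observable, which matters when the semantic tier adds latency.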

Intent-based architecture. This is the approach that sidesteps the problem entirely. Instead of writing tests that target specific selectors, you describe what the test should do in plain language: "add the first product to the cart and go to checkout." The system interprets the intent at runtime. There's nothing brittle to heal because the test never locked onto a specific element in the first place.
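A toy sketch of runtime intent resolution, assuming the page is exposed as (role, name) pairs like an accessibility tree and that simple keyword matching stands in for the AI interpreter. `Node`, `ResolutionError`, and `resolve` are hypothetical names. The point is that the test stores only the step text; the element is found fresh on every run, and an ambiguous page is reported rather than guessed at.

```python
import re
from dataclasses import dataclass


@dataclass
class Node:
    role: str  # accessible role, e.g. "button", "link", "textbox"
    name: str  # accessible name, e.g. the visible label


class ResolutionError(Exception):
    pass


def resolve(step: str, tree: list) -> Node:
    """Map a plain-language step like 'click checkout' to exactly one node at runtime.

    Keyword matching stands in for the AI interpreter; no selector is stored anywhere.
    """
    verb, _, target = step.partition(" ")
    if verb.lower() != "click":
        raise ResolutionError(f"unsupported verb: {verb!r}")
    words = set(re.findall(r"\w+", target.lower()))
    candidates = [
        n for n in tree
        if n.role in {"button", "link"} and words <= set(re.findall(r"\w+", n.name.lower()))
    ]
    if not candidates:
        raise ResolutionError(f"nothing on the page matches {target!r}")
    if len(candidates) > 1:  # two things that look like checkout: report, don't guess
        raise ResolutionError(f"ambiguous: {[c.name for c in candidates]}")
    return candidates[0]


before = [Node("button", "Checkout"), Node("link", "Help")]
after = [Node("link", "Proceed to checkout"), Node("button", "Continue shopping")]
print(resolve("click checkout", before).name)  # → Checkout
print(resolve("click checkout", after).name)   # → Proceed to checkout
```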

What the numbers actually show

The marketing claims around self-healing vary wildly. Here's what holds up to scrutiny:

- mabl reports up to 95% reduction in test maintenance for teams using their auto-healing features. This is the high end and comes from teams with relatively stable UIs.

- Capgemini's World Quality Report found that AI-based testing tools reduced maintenance effort by up to 70% and improved CI/CD pipeline stability by nearly 50%.

- Some teams using self-healing report saving 15-20 hours per sprint on selector maintenance alone.

- A broader, commonly cited figure is a 40% improvement in overall testing efficiency from AI-assisted testing, covering test creation, execution, and maintenance.

The honest version: if your tests break primarily because of selector changes, self-healing tools will cut your maintenance dramatically. If your tests break because of timing, data, or application issues, self-healing helps but won't eliminate the problem. You still need good test architecture.

Where intent-based testing changes the game

The most interesting shift in 2026 isn't better self-healing. It's tests that never need healing in the first place.

Traditional test automation encodes implementation details. Click element #checkout-btn. Fill input [name="email"]. Assert that .success-message is visible. Every one of those selectors is a liability. Every UI change is a potential break.

Intent-based testing flips this. You describe what should happen, not how to interact with the DOM. The AI figures out the "how" at runtime, every time. If the checkout button moves, changes its text, or gets wrapped in a new component, the test still works because it was never looking for a specific element. It was looking for the way to check out.

This isn't theoretical. testRigor and Momentic have been shipping this approach for a while. The tradeoff is speed (interpreting intent is slower than clicking a known selector) and occasional ambiguity (what if there are two things that look like checkout buttons?). But for teams drowning in maintenance, the tradeoff is worth it.

What we think about this at Test-Lab

We designed Test-Lab around this idea from the start.

You write test plans in plain English. Our AI agent opens a real browser, reads the page, and figures out how to execute each step. There are no selectors in your test plans. No XPath. No element IDs. When a developer redesigns the checkout flow, renames every CSS class, or swaps the entire component library, your tests keep working because they describe what to verify, not which DOM node to click.

When something genuinely breaks, like the checkout button being removed entirely, you get screenshots, step-by-step logs, and a clear explanation. That's a real regression, and you want to know about it. The difference is you stop getting woken up by false alarms from a renamed class.

This is the same principle behind self-healing, taken to its logical conclusion. Instead of building increasingly clever systems to fix broken selectors after the fact, skip the selectors entirely.

What to look for if you are evaluating tools

Not all self-healing is created equal. A few things worth checking:

- What percentage of failures does it actually heal? Ask for data broken down by failure type, not just the headline number. If they only heal selector issues, that's 28% of the problem.

- Does it heal silently or with transparency? You want to know when healing happened. Silent healing can mask real regressions.

- Does it handle flow-level changes? Element-level healing is table stakes. Can it adapt when a form goes from one page to three pages?

- What is the performance cost? LLM-based healing adds latency. For a nightly suite that's fine. For tests blocking a deploy, it might not be.

- Does it require selector-based tests at all? If your tool still needs you to write selectors and then heals them when they break, you are treating the symptom. Intent-based tools treat the cause.
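Asking for a per-category breakdown is easy to act on if the tool exports which failures it healed. Here is a sketch, assuming a hypothetical log of (category, healed) pairs; the counts are illustrative, not measured data.

```python
from collections import defaultdict


def heal_rates(failures: list) -> dict:
    """Per failure category, the fraction of failures the tool healed."""
    totals, healed = defaultdict(int), defaultdict(int)
    for category, was_healed in failures:
        totals[category] += 1
        healed[category] += int(was_healed)
    return {cat: healed[cat] / totals[cat] for cat in totals}


def overall_heal_rate(failures: list) -> float:
    """The single headline number a vendor might quote."""
    return sum(int(h) for _, h in failures) / len(failures) if failures else 0.0


# Illustrative log of (category, healed) pairs, not measured data.
log = ([("selector", True)] * 9 + [("selector", False)]
       + [("timing", False)] * 6 + [("test_data", False)] * 4)
print(overall_heal_rate(log))  # → 0.45
print(heal_rates(log))         # → {'selector': 0.9, 'timing': 0.0, 'test_data': 0.0}
```

The headline 45% looks respectable, but the breakdown shows that nothing outside the selector category was healed.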

The bottom line

Test maintenance is the silent killer of automation programs. Teams start with ambition, build a suite of 500 tests, and then spend the next year keeping them alive instead of expanding coverage. Self-healing helps, and the best implementations report maintenance reductions of 70-95%.

But the bigger opportunity is not healing broken tests. It's writing tests that can't break in the first place. If your test never references a selector, a selector change can never break it. That's the direction the industry is heading, and it's where the real time savings live.

Stop fixing broken selectors. Try Test-Lab: write tests in plain English and let AI handle the UI changes for you.