AI coding agents can generate a React component in seconds. Ask Cursor, Claude Code, or Codex to build a signup form and you'll have working code before you finish your coffee.

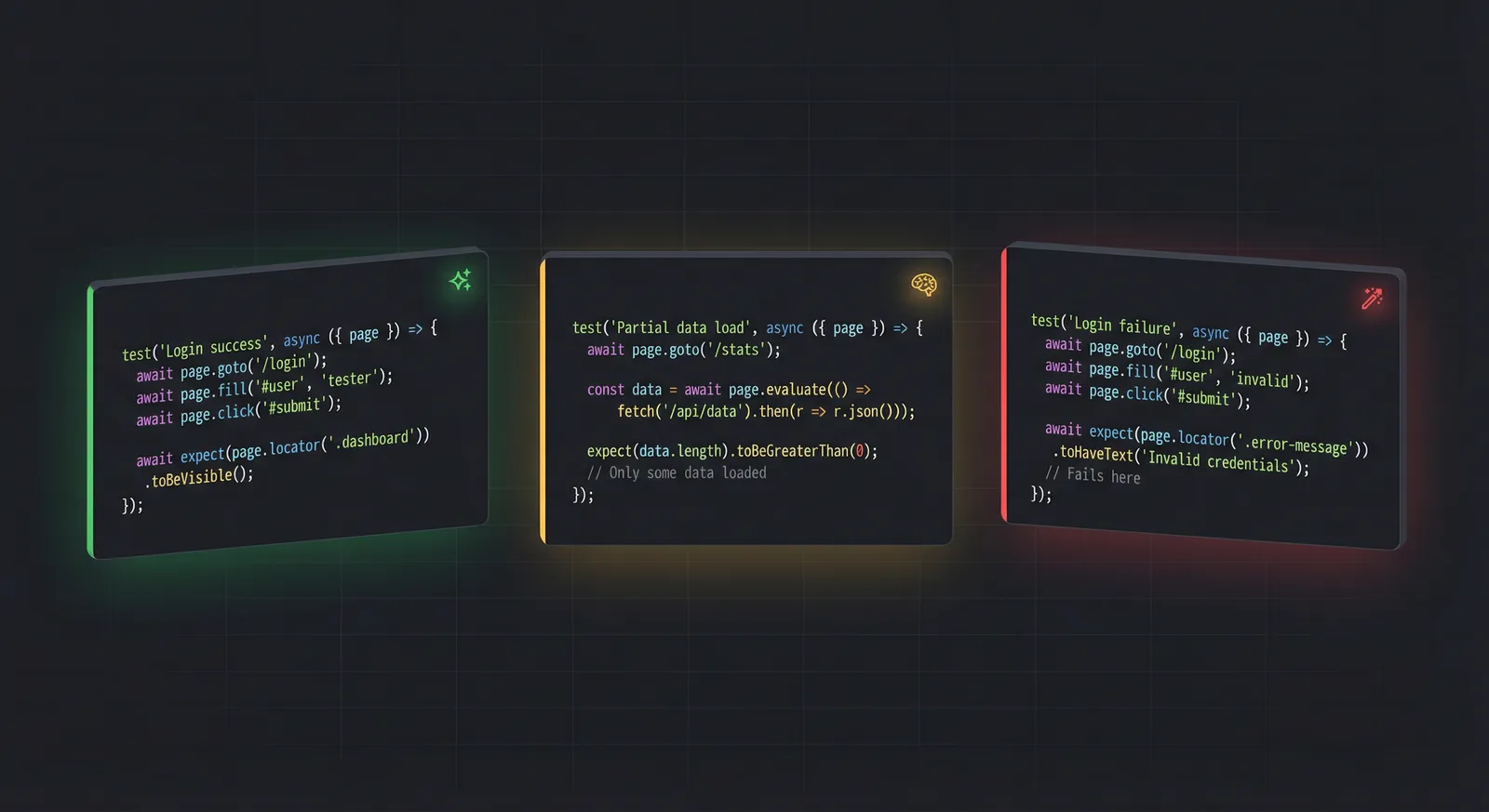

Now ask them to write a 30-step E2E test that logs into your app, navigates a dashboard, fills out a multi-page form, handles a loading spinner, and verifies the confirmation page.

That's where things get interesting.

E2E testing is not a coding task

Most "Cursor vs Claude Code vs Codex" comparisons focus on code generation - write a function, refactor a module, fix a bug. In that world, the models are surprisingly close. SWE-bench Verified scores put Opus 4.6 at 80.8%, Gemini 3 Pro at 80.6%, and Sonnet 4.6 at 79.6%, a spread of just over one percentage point.

But E2E browser testing is a fundamentally different problem.

Code generation is a sprint. You give the model a prompt, it produces output, done. E2E testing is a marathon. The model has to open a browser, interpret page state, decide what to click, handle unexpected DOM changes, recover when something fails, and maintain coherent context across dozens of steps.

It's not writing code. It's operating a browser like a human QA tester would. And that difference changes which models actually work.

The three approaches

There are three main ways people use AI agents for E2E testing right now.

Cursor agent mode. The AI runs inside your IDE with full access to your codebase. It can use Playwright MCP or CLI to drive a browser, see accessibility snapshots, and write or execute test scripts. You pick which model powers the agent - Opus, Sonnet, Codex, Gemini, whatever your setup supports.

Claude Code. Anthropic's terminal-native agent. It reads your project, installs dependencies, runs Playwright, and handles the entire test flow from the command line. It runs on Opus 4.6 or Sonnet 4.6, with a 200K context window (1M in beta).

Codex. OpenAI's coding agent, available as a macOS app or Cursor extension. It's powered by GPT-5.3, is strong at terminal commands and scaffolding, and offers a 400K-token context window with fast inference.

All three can technically drive Playwright. The question is how well they do it when the test gets complex.

What makes E2E testing hard for AI

Writing expect(button).toBeVisible() is easy. The hard part is everything that comes before and after that line.

Long-horizon planning. A complex E2E test might involve 30, 50, or 100 discrete browser actions. The model needs to plan ahead - not just "click this button" but "click this button, wait for the modal, fill the form, submit, verify the redirect, then check the database state." Losing track of the overall goal at step 22 means the test fails.
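To make the planning problem concrete, here is a minimal sketch in plain TypeScript (not Playwright). It treats a user journey as an ordered list of steps and reports exactly where the journey broke. The Step and PlanResult types and the runPlan helper are hypothetical names used only for illustration. In a real agent run, each step would drive the browser instead of returning a hard-coded boolean.

```typescript
// Hypothetical model of a long-horizon E2E plan. In a real run each step would
// drive the browser; here steps are plain functions that return true on success.
type Step = { name: string; run: () => boolean };

type PlanResult =
  | { ok: true; completed: number }
  | { ok: false; failedAt: number; failedStep: string };

// Execute steps strictly in order and stop at the first failure,
// reporting its 1-based position so the break point is explicit.
function runPlan(steps: Step[]): PlanResult {
  for (let i = 0; i < steps.length; i++) {
    if (!steps[i].run()) {
      return { ok: false, failedAt: i + 1, failedStep: steps[i].name };
    }
  }
  return { ok: true, completed: steps.length };
}

// The journey from the paragraph above, with a simulated failure at the redirect check.
const signupJourney: Step[] = [
  { name: "click button", run: () => true },
  { name: "wait for modal", run: () => true },
  { name: "fill form", run: () => true },
  { name: "submit", run: () => true },
  { name: "verify redirect", run: () => false },
  { name: "check database state", run: () => true },
];

const result = runPlan(signupJourney);
if (!result.ok) {
  console.log(`failed at step ${result.failedAt}: ${result.failedStep}`);
}
```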

DOM awareness. The model reads accessibility snapshots - structured representations of what's on the page. It needs to figure out which element is the right one to click, which input to fill, what's a loading spinner versus actual content. On complex pages with nested components and dynamic content, this is genuinely difficult.
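A simplified way to picture this: an accessibility snapshot is a tree of nodes, each with a role and an accessible name, and choosing a target means searching that tree. The AXNode shape and the findAll helper below are hypothetical. They are much simpler than real snapshots such as Playwright's ARIA snapshots, but they show why a busy page is hard. The search returns two "Save" buttons, and only the surrounding context tells the agent which one to click.

```typescript
// Simplified, hypothetical accessibility-snapshot node: role, accessible name, children.
interface AXNode {
  role: string;
  name?: string;
  children?: AXNode[];
}

// Depth-first search (in document order) for nodes matching a role and,
// optionally, an exact accessible name.
function findAll(root: AXNode, role: string, accessibleName?: string): AXNode[] {
  const matches: AXNode[] = [];
  const stack: AXNode[] = [root];
  while (stack.length > 0) {
    const node = stack.pop()!;
    if (node.role === role && (accessibleName === undefined || node.name === accessibleName)) {
      matches.push(node);
    }
    // Push children in reverse so they are popped in document order.
    const kids = node.children ?? [];
    for (let i = kids.length - 1; i >= 0; i--) stack.push(kids[i]);
  }
  return matches;
}

const snapshot: AXNode = {
  role: "main",
  children: [
    { role: "progressbar", name: "Loading" }, // a spinner, not content
    { role: "button", name: "Save" },
    {
      role: "dialog",
      name: "Confirm",
      children: [
        { role: "button", name: "Save" },
        { role: "button", name: "Cancel" },
      ],
    },
  ],
};

// Two "Save" buttons: one on the page, one inside the open dialog.
const saveButtons = findAll(snapshot, "button", "Save");
const spinners = findAll(snapshot, "progressbar");
```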

Failure recovery. A click lands on the wrong element because a toast notification appeared. A page takes 3 seconds to load instead of the expected instant response. An element exists but is covered by an overlay. The model needs to recognize what went wrong and try a different approach - not just retry the same failed action in a loop.
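The difference between recovering and looping can be sketched as a pass over distinct strategies, where each one is tried at most once. This is a hypothetical TypeScript sketch. The recover helper and the strategy labels are illustrative, and the "page" is just a boolean standing in for a toast notification that covers the target element.

```typescript
type Attempt = { strategy: string; ok: boolean };

// Try each distinct strategy once, in order; an identical retry is skipped
// rather than repeated, which is what separates recovery from looping.
function recover(
  strategies: Array<[string, () => boolean]>,
): { succeeded: boolean; attempts: Attempt[] } {
  const attempts: Attempt[] = [];
  const tried = new Set<string>();
  for (const [label, action] of strategies) {
    if (tried.has(label)) continue;
    tried.add(label);
    const ok = action();
    attempts.push({ strategy: label, ok });
    if (ok) return { succeeded: true, attempts };
  }
  return { succeeded: false, attempts };
}

// Simulated page: the click target is covered by a toast until it is dismissed.
let toastVisible = true;
const outcome = recover([
  ["click target", () => !toastVisible],
  ["click target", () => !toastVisible], // identical retry: skipped
  [
    "dismiss toast, then click",
    () => {
      toastVisible = false; // simulate closing the overlay before clicking
      return true;
    },
  ],
  ["click via alternate locator", () => true],
]);

console.log(outcome.attempts.map((a) => `${a.strategy}: ${a.ok}`).join("\n"));
```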

Context management. Every browser interaction adds to the conversation history. Snapshots, action results, error messages. Over a long test session, the context window fills up. Models that lose track of earlier steps start making contradictory decisions.
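Here is a rough sketch of why context fills up, and of one possible mitigation. Every snapshot kept in the history is paid for again on later turns, so a hypothetical compaction policy keeps only the newest snapshot in full. The 4-characters-per-token estimate is a common rule of thumb, not a real tokenizer. The compact helper is illustrative and does not describe how Cursor, Claude Code, or Codex manage context internally.

```typescript
// Rule-of-thumb estimate (an assumption, not a real tokenizer): ~4 characters per token.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

type Entry = { kind: "snapshot" | "action" | "error"; text: string };

const totalTokens = (log: Entry[]): number =>
  log.reduce((sum, e) => sum + estimateTokens(e.text), 0);

// Hypothetical policy: keep actions and errors verbatim, keep the most recent
// snapshot in full, and replace every older snapshot with a short stub.
function compact(log: Entry[]): Entry[] {
  const lastSnapshot = log.map((e) => e.kind).lastIndexOf("snapshot");
  return log.map((e, i): Entry =>
    e.kind === "snapshot" && i !== lastSnapshot
      ? { kind: "snapshot", text: "[older snapshot elided]" }
      : e,
  );
}

const page = "x".repeat(4000); // stand-in for a large accessibility snapshot
const sessionLog: Entry[] = [
  { kind: "snapshot", text: page },
  { kind: "action", text: "click Save" },
  { kind: "snapshot", text: page },
  { kind: "action", text: "fill Email" },
  { kind: "snapshot", text: page },
];

console.log(totalTokens(sessionLog), totalTokens(compact(sessionLog))); // 3006 1018
```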

Patience. Some pages need time to load. Some animations need to complete. Some elements appear after async data fetches. A model that rushes ahead gets stale element references and fails. A model that waits too long wastes tokens and time.
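The usual answer to this tradeoff is to poll for a condition with a timeout, rather than acting immediately or sleeping for a fixed, guessed duration. Playwright's own actions and web-first assertions already auto-wait this way. The waitUntil helper below is a minimal, hypothetical TypeScript version of the idea, with a setTimeout standing in for an async data fetch.

```typescript
const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Re-check `condition` every `intervalMs` until it holds (true)
// or `timeoutMs` has elapsed (false).
async function waitUntil(
  condition: () => boolean,
  timeoutMs: number,
  intervalMs = 50,
): Promise<boolean> {
  const deadline = Date.now() + timeoutMs;
  for (;;) {
    if (condition()) return true;
    if (Date.now() >= deadline) return false;
    await sleep(intervalMs);
  }
}

// Simulated async data fetch: the element "appears" after roughly 150 ms.
let rendered = false;
setTimeout(() => {
  rendered = true;
}, 150);

waitUntil(() => rendered, 1000).then((ok) => console.log("element ready:", ok));
```

The timeout caps how long a patient agent can wait, and the interval caps how stale its view of the page can get.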

Benchmarks like SWE-bench measure none of this. They measure isolated bug fixes, not sustained multi-step browser sessions.

The model tier reality

After spending considerable time running various models against real E2E test scenarios, we saw a clear pattern. Models don't scale linearly from cheap to good. They fall into distinct tiers, and the gaps between tiers are massive.

Frontier models: they actually finish

Claude Opus 4.6 and GPT-5.3 with extended thinking are the only models we tested that reliably complete complex E2E test flows.

They handle multi-page navigation without losing track of the test objective. When a step fails - wrong element, unexpected popup, slow page load - they diagnose the issue and try a different approach instead of looping. They maintain coherent context across long sessions.

The downside is cost. Opus 4.6 runs at $5/$25 per million tokens (input/output). A complex E2E test session can easily consume millions of tokens between snapshots, reasoning, and action outputs. Sessions for complex tests can run $20-30.
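As a back-of-the-envelope check on those figures, session cost is tokens multiplied by price. The token counts below are assumptions for illustration, not measurements. They assume 3M input tokens, because the growing history is re-sent on each turn, and 300K output tokens, all billed at the $5/$25 rates quoted above.

```typescript
// Prices in USD per million tokens, as quoted in this article.
interface Pricing {
  inputPerM: number;
  outputPerM: number;
}

function sessionCost(p: Pricing, inputTokens: number, outputTokens: number): number {
  return (inputTokens / 1_000_000) * p.inputPerM + (outputTokens / 1_000_000) * p.outputPerM;
}

const opus46: Pricing = { inputPerM: 5, outputPerM: 25 };

// Hypothetical session: 3M input tokens (snapshots plus re-sent history)
// and 300K output tokens (reasoning plus actions).
const cost = sessionCost(opus46, 3_000_000, 300_000);
console.log(`$${cost.toFixed(2)}`); // $22.50
```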

But here's the thing - the test gets written. You get a working, runnable E2E test at the end.

Mid-tier models: fine for simple tests

Codex 5.3 and Sonnet 4.6 can handle straightforward E2E scenarios. Single-page tests, basic form submissions, simple navigation flows - these work.

Codex 5.3 is interesting because it dominates Terminal-Bench 2.0 at 77.3% versus Opus 4.6's 65.4%. It's genuinely better at terminal automation. But E2E testing through Playwright isn't really a terminal task - it's an agent reasoning task that happens to use terminal commands. The skill that makes Codex fast at running shell commands doesn't help when the model needs to interpret a complex DOM snapshot and decide which of 50 elements to interact with.

Sonnet 4.6 scores 79.6% on SWE-bench - barely behind Opus. And developers prefer it over Sonnet 4.5 70% of the time. For code generation, it's excellent. But give it a 30-step browser automation flow and it starts struggling around steps 10-15. Context accumulation degrades its decision-making, and its recovery from failures is noticeably less sophisticated than Opus's.

Both models are significantly cheaper - Codex at $2/$10 and Sonnet at $3/$15 per million tokens. For simple test cases, the savings are real and the quality is acceptable. For complex flows, you save on token cost but the test doesn't get completed.

Budget models: not ready for this

Smaller models and budget tiers - Gemini 3 Pro in lower configurations, Flash models, and similar - generally can't complete complex E2E tests at all.

They'll start fine. Open the browser, navigate to the page, maybe click a few elements correctly. But once the test requires sustained attention - handling an auth flow, navigating through multiple pages, recovering from an unexpected modal - they fall apart.

The tokens spent before failure aren't refunded. A model that fails at step 15 of a 30-step test still cost you for those 15 steps. Often the total cost of a failed session isn't much less than a successful session with a better model.

The counterintuitive cost math

On paper, the pricing gap between model tiers is huge:

| Model | Input / output price (USD per 1M tokens) |
|---|---|
| Opus 4.6 | $5 / $25 |
| Sonnet 4.6 | $3 / $15 |
| Codex 5.3 | $2 / $10 |
| Gemini 3 Pro | $1.25 / $10 |

That makes Gemini look 4x cheaper than Opus on input tokens (and 2.5x cheaper on output). For code generation tasks that all models can complete, that gap is real.

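But for complex E2E tests, the math flips. The cost per completed test is the cost per session divided by the success rate. If a cheap model fails 80% of the time and a frontier model succeeds 90% of the time, the cheap model has to be more than 4.5x cheaper per session (0.9 / 0.2) just to break even, which is a bigger gap than even the input-price difference in the table. So the cost per completed test favors the expensive model. You're not paying for tokens - you're paying for a working test.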
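The same argument in code, as a minimal sketch under two stated assumptions. First, attempts succeed independently at a fixed rate, so the expected number of attempts is 1 / successRate. Second, a failed attempt costs about as much as a successful one on the same model. The dollar figures are illustrative: a $25 frontier session compared with a session 4x cheaper, matching the input-price gap in the table.

```typescript
// Expected spend to obtain ONE working test when each attempt independently
// succeeds with probability `successRate` (geometric distribution: 1 / p attempts).
function costPerCompletedTest(costPerAttempt: number, successRate: number): number {
  if (successRate <= 0 || successRate > 1) {
    throw new RangeError("successRate must be in (0, 1]");
  }
  return costPerAttempt / successRate;
}

// Illustrative numbers, not measurements.
const frontier = costPerCompletedTest(25, 0.9); // ≈ $27.78
const budget = costPerCompletedTest(25 / 4, 0.2); // ≈ $31.25

// The cheap model must be more than this many times cheaper per attempt to break even.
const breakEvenRatio = 0.9 / 0.2; // ≈ 4.5

console.log(frontier.toFixed(2), budget.toFixed(2), breakEvenRatio.toFixed(1));
```

The independence assumption actually flatters the cheap model. If it fails a particular flow for a systematic reason, such as an auth step it can't get through, retrying doesn't converge at all.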

This doesn't apply to simple tests. If your E2E tests are straightforward - load a page, click a button, check a result - use mid-tier models and save money. The problem is specifically with complex, multi-step flows where cheaper models can't sustain the required reasoning.

Practical recommendations

Match the model to the test complexity.

For smoke tests and basic sanity checks (5-10 steps, single page, no complex state), mid-tier models work fine. Codex 5.3 or Sonnet 4.6 in Cursor will handle these without issues.

For complex user journeys (auth flows, multi-page wizards, dynamic content, form validation across many fields), use frontier models. Opus 4.6 through Cursor or Claude Code is currently the most reliable path.
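If you run many tests, this rule of thumb can be written down as a routing function. The TestProfile fields and thresholds below are hypothetical. They come from the rough categories in this section, not from any benchmark. Anything outside the smoke-test bucket defaults to a frontier model, which is the conservative choice.

```typescript
// Hypothetical description of a test's complexity.
interface TestProfile {
  steps: number;
  pages: number;
  hasAuthFlow: boolean;
  hasDynamicContent: boolean;
}

type Tier = "mid-tier" | "frontier";

// Smoke tests (up to ~10 steps, single page, no complex state) go to a mid-tier
// model; everything else goes to a frontier model.
function pickTier(t: TestProfile): Tier {
  const isSmokeTest =
    t.steps <= 10 && t.pages <= 1 && !t.hasAuthFlow && !t.hasDynamicContent;
  return isSmokeTest ? "mid-tier" : "frontier";
}

console.log(pickTier({ steps: 8, pages: 1, hasAuthFlow: false, hasDynamicContent: false }));
console.log(pickTier({ steps: 30, pages: 4, hasAuthFlow: true, hasDynamicContent: true }));
```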

Use Playwright CLI, not MCP.

We covered this in detail in our Playwright MCP vs CLI comparison. The short version: the CLI saves browser state to disk instead of dumping it into the model's context, so it uses 4-10x fewer tokens for the same automation tasks. When you're already spending $20+ per complex test session, that efficiency matters.

Set up good project rules.

Whatever model you use, project rules files (.cursorrules or .cursor/rules in Cursor, CLAUDE.md for Claude Code, AGENTS.md for Codex) make a meaningful difference. Define your testing conventions, selector strategies, assertion patterns, and file structure. This gives the model a framework to operate within instead of making up its own conventions on every run.

Consider purpose-built testing platforms.

If you're running E2E tests regularly - not just writing them once - the model management overhead adds up fast: choosing the right model, optimizing context windows, handling failures, and managing Playwright sessions. Purpose-built AI testing platforms handle all of this automatically, so you can focus on describing what to test rather than babysitting the AI while it tries to test.

The bigger picture

41% of all code is now AI-generated. That code needs testing. The irony is that the same AI models that generate code with a ~45% security vulnerability rate are the ones people expect to also test it.

E2E testing is where coding agents hit their ceiling - it's an agent task, not a generation task. The models that close the gap on coding benchmarks haven't closed it on sustained multi-step browser automation. That gap is where purpose-built testing tools earn their value.

The best AI coding agent is not the best AI tester. At least not yet.

Skip the model management. Test-Lab runs E2E tests with AI agents that handle context management, failure recovery, and evidence capture automatically. Describe what to test, get results.