The build is red. Nobody pushed a breaking change. You check the logs. It's the same test that failed yesterday, passed this morning, and is now failing again for no apparent reason.

You re-run the pipeline. It goes green. Everyone moves on.

This happens so often that your team has a name for it. "Just re-run it." Three words that cost your organization more than you think.

How bad is the flaky test problem?

Worse than most teams realize, because the cost is spread thin across every engineer, every day.

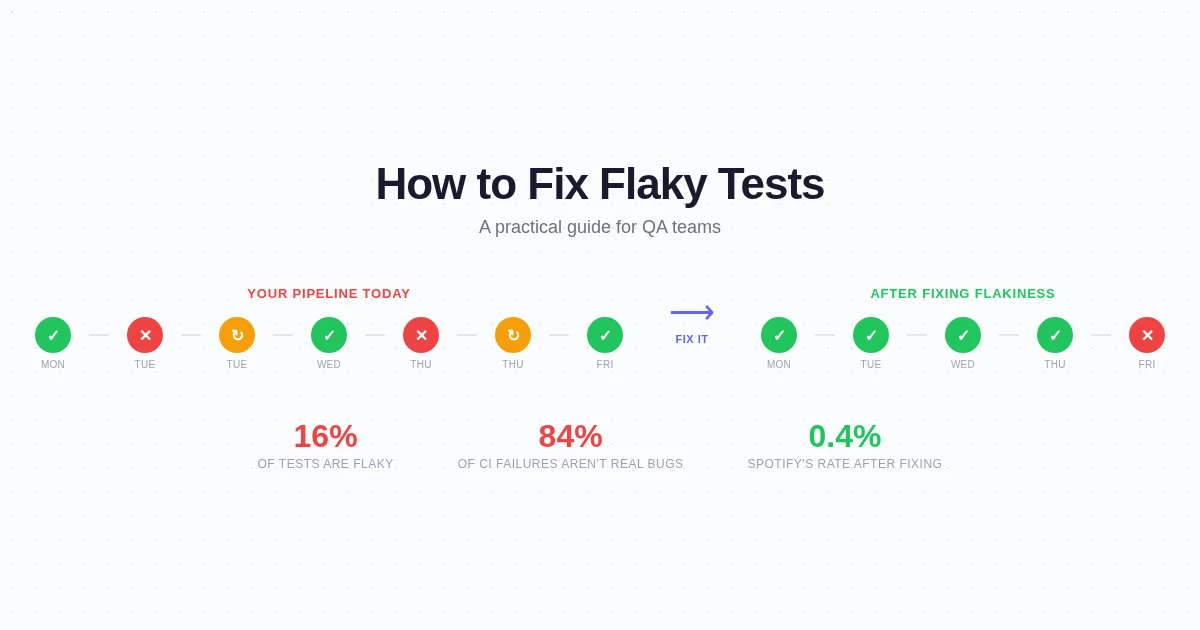

Google found that about 16% of its tests exhibit some flaky behavior. That's not 16% of tests failing on a given run. It's 16% of all tests that will, at some point, produce a false result. For a 50-person team, flakiness at that level costs roughly $120,000 per year in wasted investigation time alone.

And it's getting worse. Bitrise analyzed over 10 million mobile builds and found that 26% of teams experienced test flakiness in 2025, up from 10% in 2022. The problem is growing faster than teams are solving it.

The real damage isn't the wasted time, though. It's the erosion of trust. When tests cry wolf often enough, developers stop listening. At Google, 84% of the pass-to-fail transitions seen in post-submit testing involved a flaky test, not a real regression. At that ratio, can you blame anyone for hitting "re-run" instead of investigating?

That's the trap. Flaky tests train your team to ignore failures. And then when a real bug makes the build red, it gets ignored too.

Why tests flake (it's not random)

Flaky tests feel random. They're not. Every flaky test has a deterministic root cause that's just hard to reproduce consistently. The causes fall into a few buckets, and knowing which bucket you're dealing with determines the fix.

Timing issues (about 45% of flaky failures). This is the big one. Your test clicks a button before the page finishes loading. It reads a value before an API response arrives. It asserts on an element that's mid-animation. The test and the application are racing each other, and sometimes the test loses.

The classic symptom: the test passes locally (your machine is fast) and fails in CI (the container is slower). Or it passes when run alone and fails when run in parallel with other tests competing for resources.

Shared state and test isolation failures (about 20%). Test A writes to a database. Test B reads from the same database and expects a clean state. When they run in the right order, everything's fine. Shuffle the order, and Test B breaks. Same thing happens with cookies, localStorage, and in-memory caches that leak between test runs.

Test order dependencies (about 12%). A subtle variant of shared state. Test B only passes because Test A set up some precondition. Run Test B first, and it fails. This is especially common in E2E suites where login state carries over between tests by accident.

Environmental differences (about 23%). The test was written on a Mac with Chrome 126. CI runs on Linux with Chrome 125. The font renders one pixel differently. The animation takes 50ms longer. The date format changes because the timezone is different. A Docker container under memory pressure handles JavaScript garbage collection differently than your 32GB development machine. None of these are bugs in your product. All of them can make a test fail.

The encouraging part is that most flaky failures fall into just the first two categories. Timing and shared state together account for roughly two-thirds of all flakiness (about 65% by the figures above). Fix those two, and the rest becomes manageable.
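The shared-state and order-dependency failures described above are easy to reproduce in miniature. The sketch below is a toy, not a real test runner: the `suite`, `runShared`, and `runIsolated` names are made up for illustration. It shows a test that only passes because an earlier test left a session behind.

```typescript
// Hypothetical miniature suite; the names and the runner are illustrative only.
type State = Map<string, string>;
type TestCase = { name: string; run: (state: State) => boolean };

const suite: TestCase[] = [
  // Test A logs in and leaves the session behind.
  { name: "A: login", run: (s) => { s.set("session", "alice"); return true; } },
  // Test B silently relies on the session that A created.
  { name: "B: view profile", run: (s) => s.get("session") === "alice" },
];

// Runs every test against ONE shared state object (the bug being demonstrated)
// and returns the names of the tests that failed.
function runShared(order: TestCase[]): string[] {
  const shared: State = new Map();
  return order.filter((t) => !t.run(shared)).map((t) => t.name);
}

// Runs every test against its own fresh state, so order cannot matter.
function runIsolated(order: TestCase[]): string[] {
  return order.filter((t) => !t.run(new Map())).map((t) => t.name);
}

const reversedSuite = suite.slice().reverse();
const failuresInOrder = runShared(suite);          // no failures: A runs before B
const failuresReversed = runShared(reversedSuite); // B fails: no session yet
const failuresIsolated = runIsolated(suite);       // B fails in any order
```

With shared state, B's result depends on the order. With isolated state, B fails every time, which is what you want: the hidden dependency becomes a consistent, debuggable failure instead of an intermittent one.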

The fixes that actually work

Let's go through the playbook, starting with the quick wins and moving to the structural fixes.

Stop using sleep()

This is low-hanging fruit that still trips up experienced teams. Any test that has await sleep(2000) or Thread.sleep(3000) in it is a flaky test waiting to happen. Sometimes 2 seconds is enough. Sometimes it isn't. You're gambling on timing instead of testing behavior.

Replace arbitrary sleeps with explicit waits. Wait for a specific condition: the element is visible, the API response arrived, the loading spinner disappeared. Every modern testing framework supports this. Playwright has waitForSelector and auto-waiting built in. Cypress has cy.get() with built-in retry. There's no excuse for sleep() in a test suite in 2026.
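To make the difference concrete, here is a minimal sketch of what an explicit wait does under the hood: poll a condition until it holds or a deadline passes. The `waitFor` helper, its default timeout, and the polling interval are assumptions for illustration. In a real suite, prefer the waiting built into your framework over a hand-rolled helper.

```typescript
// Polls `condition` until it returns true, or throws once `timeoutMs` has elapsed.
async function waitFor(
  condition: () => boolean | Promise<boolean>,
  timeoutMs = 5000,
  intervalMs = 50,
): Promise<void> {
  const deadline = Date.now() + timeoutMs;
  while (true) {
    if (await condition()) return;
    if (Date.now() >= deadline) {
      throw new Error(`Condition not met within ${timeoutMs} ms`);
    }
    // Short pause between checks; total wait adapts to how slow the app is.
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
}

// Stand-in for an app whose data arrives after an unpredictable delay.
async function demoSlowResponse(): Promise<boolean> {
  let loaded = false;
  setTimeout(() => { loaded = true; }, 120); // simulated slow response
  await waitFor(() => loaded, 2000, 10);     // returns as soon as data is there
  return loaded;
}
```

Unlike a fixed sleep, this returns as soon as the condition is true on a fast machine and keeps waiting on a slow one. When the condition never becomes true, it fails with a clear timeout error instead of a confusing assertion further down.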

This single change fixes a surprising number of flaky tests. A team at Semaphore reported that replacing hardcoded waits with explicit conditions eliminated roughly 30% of their flaky test volume without changing anything else.

Isolate your tests properly

Every test should start from a known state and clean up after itself. In practice, that means resetting cookies and localStorage between tests, never relying on a previous test having logged in, and never assuming the database is in any particular state. Use test fixtures or factories to create exactly the data you need, then tear it down when the test finishes.

If you're running tests in parallel (and you should be, for speed), make sure they don't share resources. Separate database schemas per test worker, or use transactions that roll back. The goal is that any test can run in any order, at any time, alone or alongside others, and produce the same result.
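A rough sketch of both ideas, using an in-memory object as a stand-in for a real database. The `withFreshDb` helper and the `test_worker_N` naming scheme are hypothetical. Your framework's fixture mechanism and your database's schema tooling would take their place.

```typescript
type Db = { users: string[] };

// Hypothetical naming scheme: one schema per parallel worker,
// so workers never read or write each other's tables.
function schemaFor(workerIndex: number): string {
  return `test_worker_${workerIndex}`;
}

// Hypothetical fixture helper: builds known state, runs the test body,
// and always tears down, even if the body throws.
function withFreshDb<T>(body: (db: Db) => T): T {
  const db: Db = { users: [] }; // setup: known, empty state
  try {
    return body(db);
  } finally {
    db.users.length = 0;        // teardown: leave nothing behind
  }
}

// Each "test" creates exactly the data it needs.
const countAfterInsert = withFreshDb((db) => {
  db.users.push("alice");
  return db.users.length;       // 1
});

// The next test starts clean, regardless of what ran before it.
const countInNextTest = withFreshDb((db) => db.users.length); // 0
```

The `try`/`finally` matters: teardown that only runs when a test passes leaves stale data behind exactly when things are already going wrong.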

Quarantine, don't delete

The worst response to a flaky test is deleting it. That test was covering something. The second worst response is leaving it in the critical path where it blocks deploys with false failures.

Spotify built a system for this that's worth copying. They move flaky tests into a quarantine suite that runs separately from the merge-blocking pipeline. The tests still execute, failures still get tracked, but they can't block deployments. Each quarantined test gets an assigned owner with a deadline for fixing it.

The emphasis on ownership matters. "The team will fix it" means nobody fixes it. A specific person with a date attached actually gets results. Spotify reduced their flaky test rate from 4.5% to 0.4% in three months using this approach.
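The mechanics can be very small. The sketch below is not Spotify's system, only an illustration of the idea with made-up names: a quarantine list where every entry carries an owner and a deadline, a function that splits the suite into merge-blocking and non-blocking sets, and a check for entries that have passed their deadline.

```typescript
// fixBy is an ISO date string (YYYY-MM-DD); all names here are hypothetical.
type QuarantineEntry = { test: string; owner: string; fixBy: string };

// Splits the suite: quarantined tests still run, but outside the blocking set.
function partition(allTests: string[], quarantine: QuarantineEntry[]) {
  const quarantined = new Set(quarantine.map((q) => q.test));
  return {
    blocking: allTests.filter((t) => !quarantined.has(t)),
    nonBlocking: allTests.filter((t) => quarantined.has(t)),
  };
}

// Entries whose deadline has passed. ISO dates sort correctly as plain strings.
function overdue(quarantine: QuarantineEntry[], today: string): QuarantineEntry[] {
  return quarantine.filter((q) => q.fixBy < today);
}

const quarantineList: QuarantineEntry[] = [
  { test: "checkout.spec", owner: "dana", fixBy: "2026-03-01" },
  { test: "search.spec", owner: "lee", fixBy: "2026-04-15" },
];

const plan = partition(["login.spec", "checkout.spec", "search.spec"], quarantineList);
const late = overdue(quarantineList, "2026-03-10"); // only checkout.spec is overdue
```

Running `overdue` on a schedule and posting the result where the team sees it is what keeps quarantine from turning into a graveyard.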

Retries are a band-aid, not a fix

Most CI systems support automatic retries. A test fails, it re-runs, it passes on the second attempt, the build goes green. Problem solved?

No. Problem hidden.

Retries are useful for actual transient failures: a network blip, a container that was slow to start, a third-party API that timed out. But if a test needs retries to pass reliably, it's flaky. The retry is masking the root cause instead of fixing it.

The worst version of this is silent retries where the second attempt passes and nobody ever sees that the first attempt failed. Your CI dashboard shows green. Your flaky test count looks like zero. Meanwhile, you're paying for extra runs of every flaky test and the underlying timing bug is still there, waiting to bite you when the retry happens to fail too.

If you use retries, log them. Track which tests retry most often. That's your hit list for actual fixes.
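Here is one way that could look, as a sketch rather than a drop-in for any particular CI system. The `runWithRetry` wrapper, the `retryLog` array, and the three-attempt default are all assumptions. The point is only that every retried pass leaves a record.

```typescript
type RetryRecord = { test: string; attempts: number; passed: boolean };

const retryLog: RetryRecord[] = [];

// Runs `body` up to `maxAttempts` times and records every run, so a pass on
// attempt 2 or 3 stays visible instead of silently turning the build green.
function runWithRetry(test: string, body: () => boolean, maxAttempts = 3): boolean {
  let attempts = 0;
  let passed = false;
  while (attempts < maxAttempts && !passed) {
    attempts++;
    passed = body();
  }
  retryLog.push({ test, attempts, passed });
  return passed;
}

// Tests that needed more than one attempt, most frequent first.
function hitList(log: RetryRecord[]): [string, number][] {
  const counts = new Map<string, number>();
  for (const r of log) {
    if (r.attempts > 1) counts.set(r.test, (counts.get(r.test) ?? 0) + 1);
  }
  return Array.from(counts.entries()).sort((a, b) => b[1] - a[1]);
}
```

A test that passes first time never appears in `hitList`. A test that appears there week after week is flaky, whatever the dashboard color says.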

Build a flakiness dashboard

You can't fix what you can't see. The most effective thing Spotify did, before any code changes, was to make flakiness visible. They built a table showing which tests flaked, how often, and when. Visibility alone dropped their flaky rate from 6% to 4% in two months, before they wrote a single fix.

Developers started fixing their own flaky tests once they could see them. Peer pressure and personal pride did more than any process mandate.

Track these metrics for each test: pass rate over the last 30 runs, number of retries needed, time since last flaky failure, and who owns it. Publish the dashboard where the team can see it. The rest tends to follow.
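Those four metrics fit in one small function. The sketch below assumes a hypothetical `Run` record per CI execution, ordered oldest first, and treats a "flaky failure" as a run that only passed after at least one retry. Adjust that definition to whatever your CI actually records.

```typescript
// One CI execution of a single test; finishedAt is epoch milliseconds.
type Run = { passed: boolean; retries: number; finishedAt: number };

type DashboardRow = {
  test: string;
  owner: string;
  passRate: number;                  // over the last 30 runs, 0..1
  retries: number;                   // total retries in that window
  daysSinceLastFlake: number | null; // null if no flake in the window
};

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// `runs` is assumed to be ordered oldest first.
function summarize(test: string, owner: string, runs: Run[], now: number): DashboardRow {
  const recent = runs.slice(-30);
  const passes = recent.filter((r) => r.passed).length;
  // Assumption: a flake is a run that passed only after retrying.
  const flakes = recent.filter((r) => r.passed && r.retries > 0);
  const lastFlake = flakes.length > 0 ? flakes[flakes.length - 1].finishedAt : null;
  return {
    test,
    owner,
    passRate: recent.length > 0 ? passes / recent.length : 1,
    retries: recent.reduce((sum, r) => sum + r.retries, 0),
    daysSinceLastFlake: lastFlake === null ? null : (now - lastFlake) / MS_PER_DAY,
  };
}
```

Sorting the resulting rows by `passRate` ascending gives you the table; even pasted into a shared spreadsheet, that is enough to start.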

The deeper problem with selector-based testing

All the fixes above assume your tests are fundamentally sound and just need debugging. But there's a structural issue with how most E2E tests are written that makes flakiness almost inevitable.

Selector-based tests are brittle by design. You're telling the test to find #checkout-btn or .submit-form > button:first-child. These selectors are coupled to implementation details that change constantly. A developer renames a CSS class and 15 tests break. A component gets refactored and the DOM structure changes. A designer moves a button and the XPath stops matching.

Self-healing test tools address part of this by trying multiple locator strategies when the primary one fails. That helps with the selector rename problem. But it doesn't help with timing, state isolation, or environmental issues.

The deeper fix is tests that aren't tied to selectors at all.

How intent-based testing changes the flakiness equation

When you describe a test as "go to the checkout page, fill in the payment form, and verify the order confirmation appears," there's nothing in that description that can become stale. No CSS selector to break. No XPath to invalidate. No element ID to rename.

An AI agent executing that test interacts with the page the way a human would. It finds the payment form by understanding what a payment form looks like, not by matching a selector. If the form loads slowly, the agent waits because it can see the page isn't ready. If a modal pops up unexpectedly, the agent handles it because it can read what's on screen.

This doesn't eliminate all flakiness. Network failures still happen. Applications still throw errors. But it eliminates the entire category of flakiness that comes from brittle test code, which in many selector-based suites is a large share of the problem.

The numbers back this up. Teams using intent-based testing report maintenance near zero for UI changes, compared to 5-10 hours per week for a 100-test selector-based suite. That maintenance gap is almost entirely flaky tests being fixed over and over.

A practical flaky test action plan

If your team is drowning in flaky tests right now, here's the order of operations:

This week: Replace every sleep() in your test suite with an explicit wait condition. Track how many you find. It'll be more than you expect.

Next two weeks: Set up a flakiness dashboard. Even a spreadsheet works. Track which tests fail and re-pass without code changes. Identify your top 10 worst offenders.

Month one: Quarantine the top 10. Assign each one to a specific engineer with a two-week fix deadline. Most will be timing issues or shared state problems that take an hour to fix once someone actually looks at them.

Month two: Evaluate whether your testing approach is structurally sound. If you're spending more than 5 hours per week fixing tests that aren't catching real bugs, the framework isn't the problem. The approach is. Consider whether AI-powered testing could replace the most maintenance-heavy parts of your suite.

Ongoing: Every flaky test that gets fixed gets a root cause tag (timing, state, environment, selector). After a few months, you'll see which category dominates and can address it systematically. For most teams, it's timing. For most teams, explicit waits fix it.
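The dashboard step in the plan hinges on spotting tests that fail and then pass with no code change. One simple heuristic, sketched below with a hypothetical `CiResult` record, is to flag any test that both passed and failed on the same commit. A test that only ever fails on a commit is treated as a real failure, not a flake.

```typescript
// One test outcome from CI history; field names are assumptions.
type CiResult = { test: string; commit: string; passed: boolean };

// Returns [test, flakyCommitCount] pairs, worst offenders first.
function topFlaky(results: CiResult[], limit = 10): [string, number][] {
  // For each test+commit pair, remember which outcomes were seen.
  const seen = new Map<string, { test: string; pass: boolean; fail: boolean }>();
  for (const r of results) {
    const key = JSON.stringify([r.test, r.commit]);
    const entry = seen.get(key) ?? { test: r.test, pass: false, fail: false };
    if (r.passed) entry.pass = true;
    else entry.fail = true;
    seen.set(key, entry);
  }
  // A commit counts as flaky for a test if the test both passed and failed on it.
  const counts = new Map<string, number>();
  Array.from(seen.values()).forEach((e) => {
    if (e.pass && e.fail) counts.set(e.test, (counts.get(e.test) ?? 0) + 1);
  });
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1])
    .slice(0, limit);
}
```

Feed it a few weeks of CI results and the default `limit` of 10 gives you the quarantine list for month one.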

The cost of doing nothing

Let's put a number on it. If your 50-person team spends 2% of engineering time on flaky tests (the Google average), that's one full-time engineer equivalent spent re-running pipelines, investigating false failures, and patching brittle selectors. At $150,000 fully loaded, you're burning $150K per year on tests that aren't catching bugs.

That's the optimistic number. Teams with older, larger suites report 5-8% of time spent on flakiness. For the same 50-person team, that's 2.5 to 4 engineers' worth of productivity lost to a problem that has known solutions.
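The arithmetic is simple enough to rerun with your own numbers. The sketch below uses the figures from this section; applying the same $150,000 loaded cost to the 5-8% range is an assumption made here for illustration, and the `flakyCost` name is made up.

```typescript
// Engineers lost = team size x fraction of time spent on flaky tests.
function flakyCost(teamSize: number, timeFraction: number, loadedCostPerEngineer: number) {
  const engineers = teamSize * timeFraction; // full-time equivalents lost
  return { engineers, dollars: Math.round(engineers * loadedCostPerEngineer) };
}

const baseline = flakyCost(50, 0.02, 150000); // about 1 engineer, $150,000 per year
const lowEnd = flakyCost(50, 0.05, 150000);   // about 2.5 engineers, $375,000 per year
const highEnd = flakyCost(50, 0.08, 150000);  // about 4 engineers, $600,000 per year
```

Swap in your own team size, an honest estimate of the time fraction, and your loaded cost per engineer to get a number you can take to a planning meeting.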

Fix the flaky tests, or replace the approach that keeps creating them. Either way, "just re-run it" shouldn't be part of your team's vocabulary.

The best testing setup is one where a red build means something actually broke. Where your team trusts the pipeline enough to stop what they're doing and investigate. That's the goal. Everything else is just noise.
