Your QA team is good at their job. They catch usability issues that no script would flag, they think of edge cases developers miss, and they understand the product deeply enough to know when something feels off.

They're also stuck running the same 250 regression tests every release cycle. Each cycle takes roughly 33 hours of focused clicking, typing, and checking. Your application has 12 releases per month. That's 12 regression cycles. Each one takes a week of manual effort for one person.

That's your best QA talent spending most of their time on repetitive verification instead of the exploratory, creative work that actually needs a human brain.

This post is about what testing actually costs when you break down the numbers by approach. The goal isn't to argue that manual testing is bad. It's to show where each approach makes sense, so your team spends its time where it matters most.

The three approaches

There are three ways to test software in 2026. Each has a real cost, a hidden cost, and a ceiling.

Manual testing means a human clicks through your app, follows test scripts, checks results, and files bugs. It's the default, and it's irreplaceable for certain types of work. But for repetitive regression, it's the most expensive approach per test and the hardest to scale.

Traditional automation (Selenium, Cypress, Playwright) means engineers write scripts that drive a browser programmatically. It's fast to execute but slow to build and expensive to maintain. The hidden cost is the maintenance burden that grows with your codebase.

AI-powered testing means you describe what to test in plain language and an AI agent executes it in a real browser. It's the newest approach, the fastest to set up, and the cheapest to maintain. The tradeoff is execution speed per test and maturity at enterprise scale.

Let's put real numbers on each one.

Manual testing: the true cost

Start with the obvious number. A QA engineer in the US averages $101,000 per year (Glassdoor, 2026). In Eastern Europe, $30,000 to $50,000. Offshore in India, $13,000 to $30,000. Those salary numbers look manageable until you calculate what they buy you.

A manual tester can execute 200 to 300 test cases per regression cycle. Each test case takes 10 to 15 minutes of focused work. A smoke suite of 20 tests burns 45 to 60 minutes. A full regression of 800 tests takes 200 hours. That's five weeks of one person's time for a single pass.
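The arithmetic behind that five-week figure is easy to check. A minimal sketch, assuming the 15-minute upper bound per test case and a 40-hour working week (the function name and the 40-hour default are illustrative, not from any tool):

```python
def regression_effort(num_tests: int, minutes_per_test: float,
                      hours_per_week: float = 40.0) -> tuple[float, float]:
    """Return (hours, weeks) of one person's time for a single manual pass."""
    hours = num_tests * minutes_per_test / 60
    weeks = hours / hours_per_week
    return hours, weeks


hours, weeks = regression_effort(num_tests=800, minutes_per_test=15)
print(f"{hours:.0f} hours, {weeks:.1f} weeks")  # 200 hours, 5.0 weeks
```

Plugging in your own suite size and per-test time gives a quick estimate of what one full manual pass costs in calendar time.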

Here's the uncomfortable part: repetitive regression testing catches about 30% of defects. Not because testers are bad at their jobs. Because nobody can maintain full precision when checking 300 things in a row. By test case 250, attention naturally drops. This isn't a human failing. It's a human reality. Regression testing is exactly the kind of work that shouldn't require a human brain in the first place.

The cost per test case works out to $10 to $25 for manual execution, accounting for salary, overhead, and the fact that a tester's day includes meetings, documentation, and communication alongside the actual testing.

Hidden costs that don't show up on the spreadsheet:

The biggest one is speed. Manual testing creates a bottleneck between "code ready" and "code shipped." When your regression cycle takes a week, you either wait a week to ship or you ship without full regression. Neither option is good.

Then there's inconsistency. A tester runs the same checkout flow differently on Tuesday than they did on Monday. Different click timing, different data entered, different things they notice or miss. You're not comparing apples to apples across runs.

And coverage gaps. Manual testers cover the flows they're told to cover. Exploratory testing adds value, but it's inconsistent and hard to scale. The edge cases that break in production are usually the ones nobody thought to test manually.

Where manual testing still wins: usability issues, visual polish, "does this feel right" judgment calls. A Forrester study found that targeted manual analysis catches about 30% more usability and contextual issues than automation alone. Humans are better at noticing when something looks wrong even if it technically works.

Traditional automation: the setup trap

Traditional automation flips the cost profile. Execution is nearly free. Creation is expensive. Maintenance is the killer.

A decent automation engineer costs $110,000 to $140,000 per year. They can write 3 to 5 solid E2E tests per day when starting from scratch, fewer as the suite gets complex. Setting up the framework, CI integration, and test infrastructure takes 2 to 4 weeks before the first useful test runs.

The execution speed advantage is dramatic. That 20-test smoke suite that took 45 to 60 minutes manually? Automated, it runs in 3 to 5 minutes. The 800-test regression that took 200 hours manually finishes in minutes on a parallel grid. This is the stat that sells automation to management.

But then reality sets in.

70 to 80% of automation effort goes to maintenance, not creation. Your team writes tests in month one. In month two, half of them break because the UI changed. In month three, the engineers who should be writing new tests are fixing old ones instead. By month six, the test suite is a maintenance project that happens to occasionally catch bugs.

Flaky tests make it worse. Tests that pass and fail randomly erode trust in the pipeline. Teams start ignoring failures, which defeats the purpose of having tests. The average cost of flaky test maintenance is roughly $2,250 per month in developer time.
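One way to make flakiness visible before it erodes trust is to rerun each test several times against the same commit and flag any test whose outcomes disagree. A minimal sketch of that idea; the `history` structure and the test names are hypothetical, not the output of any particular CI tool:

```python
def find_flaky(history: dict[str, list[bool]]) -> list[str]:
    """Return names of tests that have both passing and failing runs.

    Assumes every run in a test's list was made against the same commit,
    so a mixed result cannot be explained by a code change.
    """
    return sorted(name for name, runs in history.items() if len(set(runs)) > 1)


# Hypothetical outcomes from five reruns of each test on one commit.
history = {
    "checkout_flow": [True, True, False, True, True],
    "login": [True, True, True, True, True],
    "export_csv": [False, False, False, False, False],
}
print(find_flaky(history))  # ['checkout_flow']
```

Note that a test that fails every time is not flaky under this definition. It is a consistent failure, which is a different and more useful signal.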

The real cost breakdown for a mid-size team:

| Cost factor | Monthly | Annual |
|---|---|---|
| QA engineers (2 FTE) | $15,000-20,000 | $180,000-240,000 |
| Tools and infrastructure | $500-2,500 | $6,000-30,000 |
| Maintenance time (70-80% of effort) | Included in FTE | Included in FTE |
| Total | $15,500-22,500 | $186,000-270,000 |

Out of that $180K+ in engineering cost, roughly $130K to $190K goes to keeping existing tests alive rather than writing new ones or building features. That's the maintenance tax.
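The maintenance tax is just the maintenance share applied to the engineering line of the table. A minimal sketch of that calculation, assuming the 70 to 80% share quoted earlier (the function name is illustrative):

```python
def maintenance_tax(annual_engineering_cost: int, maintenance_share_pct: int) -> float:
    """Annual engineering spend that goes to keeping existing tests alive."""
    return annual_engineering_cost * maintenance_share_pct / 100


low = maintenance_tax(180_000, 70)   # low end of cost, low end of share
high = maintenance_tax(240_000, 80)  # high end of cost, high end of share
print(f"${low:,.0f} to ${high:,.0f}")  # $126,000 to $192,000
```

That lands on the same range as the rounded $130K to $190K figure.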

Payback period: Traditional automation typically breaks even in 7 to 14 months. Teams with frequent releases (weekly or daily) see faster payback because each automated run replaces a manual cycle. Teams with monthly releases might wait over a year before the investment pays off. The 300 to 500% ROI that vendors cite is real, but it's a 12 to 18 month timeline, and it assumes your team actually maintains the suite well enough to keep it useful.
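To see why release frequency drives payback, here is a rough break-even sketch. Every input is an illustrative assumption rather than measured data: a hypothetical $40,000 upfront build cost, $2,500 per manual cycle (250 tests at the $10 low end), and $1,000 per month of upkeep.

```python
import math
from typing import Optional


def months_to_break_even(upfront_cost: float, manual_cost_per_cycle: float,
                         cycles_per_month: float, monthly_upkeep: float) -> Optional[int]:
    """Whole months until avoided manual cycles repay the upfront build cost."""
    monthly_saving = manual_cost_per_cycle * cycles_per_month - monthly_upkeep
    if monthly_saving <= 0:
        return None  # upkeep eats the entire saving, so it never pays back
    return math.ceil(upfront_cost / monthly_saving)


# Illustrative inputs only: same suite, different release cadence.
weekly = months_to_break_even(40_000, 2_500, cycles_per_month=4, monthly_upkeep=1_000)
monthly = months_to_break_even(40_000, 2_500, cycles_per_month=1, monthly_upkeep=1_000)
print(weekly, monthly)  # 5 27
```

The absolute numbers depend entirely on the assumed inputs. The point is the shape: quadrupling the number of cycles cuts the payback period by more than a factor of four, because upkeep is a fixed monthly cost.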

AI-powered testing: the new math

AI testing changes the cost structure fundamentally. Let's break it down the same way.

Creation cost: near zero. You don't write test scripts. You describe what to test in a few sentences of plain English. "Go to the checkout page, add the Pro plan, complete payment with test credentials, verify the confirmation page shows the correct amount." That takes two minutes, not two hours.

A team of three engineers who'd spend a month building a 50-test Playwright suite can have the equivalent coverage in an afternoon. Not because AI testing is magic, but because it removes the translation step between "what we want to test" and "code that tests it."

Maintenance cost: close to zero. This is where the math gets interesting. Traditional automation spends 70 to 80% of effort on maintenance. AI-powered testing drops that to 5 to 15%. When there are no selectors to break, selector changes can't break your tests. When the agent navigates by understanding the UI rather than matching DOM elements, a redesign doesn't destroy your test suite.

Industry data backs this up. Teams switching to AI-native testing platforms report 70 to 85% reduction in maintenance effort. ACCELQ reported 72% maintenance reduction with a 53% cost reduction. Our numbers at Test-Lab are even more aggressive because the agent-based approach means there's nothing to "maintain" in the traditional sense.

Platform cost: fraction of traditional tools. Test-Lab starts at $0 per month with pay-as-you-go credits. The BYOK Pro plan is $29 per month. Compare that to Testim at $450+ per month or Mabl at $499+ per month. Even enterprise AI testing platforms come in at a fraction of what a traditional automation setup costs in total.

The same mid-size team comparison:

| Cost factor | Monthly | Annual |
|---|---|---|
| Engineering time (test creation + review) | $1,000-3,000 | $12,000-36,000 |
| Platform cost | $0-200 | $0-2,400 |
| Maintenance time | Near zero | Near zero |
| Total | $1,000-3,200 | $12,000-38,400 |

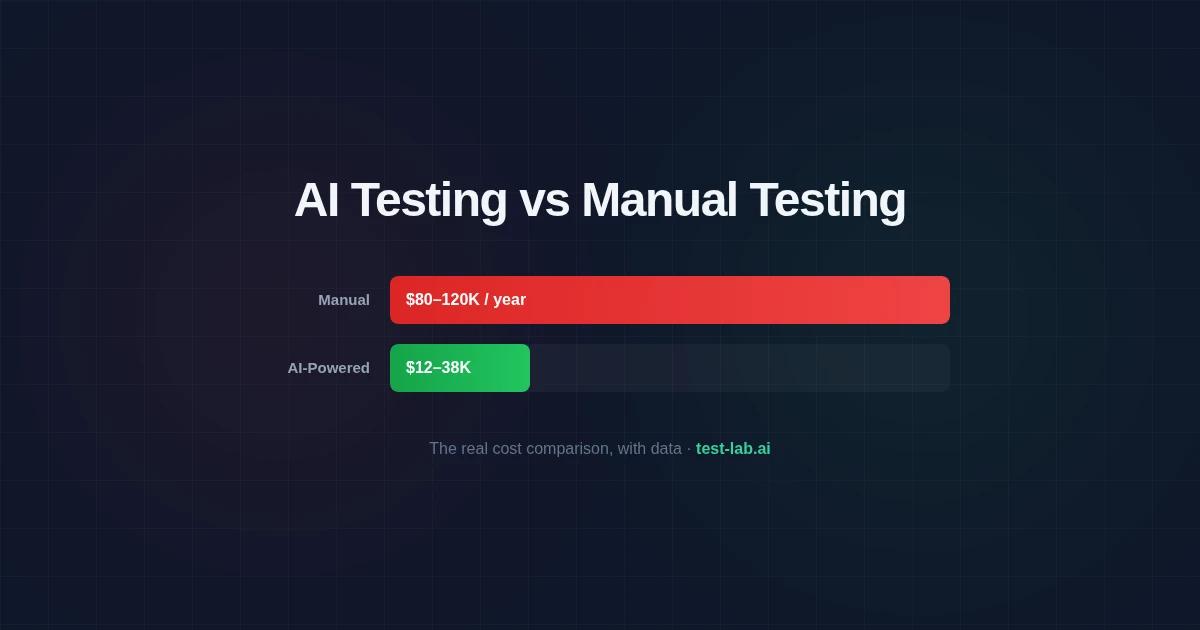

That's roughly 85% cheaper than traditional automation and roughly 70 to 85% cheaper than manual testing for equivalent coverage.

Payback period: 3 to 6 months, compared to 7 to 14 for traditional automation. It's faster because there's almost no upfront investment. You're paying for test runs, not building infrastructure.

Side-by-side: the full picture

| | Manual | Traditional automation | AI-powered |
|---|---|---|---|
| Setup time | None | 2-4 weeks | Minutes |
| Cost per test case (creation) | $10-25 per execution | $50-150 to write | $0 (describe in English) |
| Execution speed (20 tests) | 45-60 minutes | 3-5 minutes | 5-15 minutes |
| Maintenance burden | None (re-execute each time) | 70-80% of effort | 5-15% of effort |
| Bug detection rate | ~30% | ~55% | 90-95% |
| Scalability | Linear cost increase | Fixed infra + linear maintenance | Sub-linear (self-healing) |
| Payback period | N/A | 7-14 months | 3-6 months |
| Annual cost (50-test suite) | $80,000-120,000 | $186,000-270,000 | $12,000-38,400 |
| Best for | Usability, exploratory | Data-heavy, high-frequency | Most E2E testing |

A few things jump out from this table.

Traditional automation is actually more expensive than manual testing when you account for the engineering talent required to build and maintain it. The value isn't cost savings on a per-test basis. It's speed and consistency. Automated tests run in minutes instead of days, and they run the same way every time.

AI-powered testing comes out ahead on every axis except execution speed. A manual tester clicks through a flow in 10 minutes. An automated script does it in 30 seconds. An AI agent takes 2 to 5 minutes. For most teams, the difference between 30 seconds and 3 minutes doesn't matter. The difference between $270K and $38K per year absolutely does.
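The annual-cost row of the table can be turned into savings percentages directly. A minimal sketch, assuming it is fair to compare low end with low end and high end with high end (a simplification, since the ranges come from different team setups):

```python
def percent_cheaper(baseline: float, alternative: float) -> int:
    """Percentage saved by paying `alternative` instead of `baseline`, rounded."""
    return round((1 - alternative / baseline) * 100)


# (low, high) annual cost ranges copied from the comparison table.
annual_cost = {
    "manual": (80_000, 120_000),
    "traditional": (186_000, 270_000),
}
ai_low, ai_high = 12_000, 38_400

for approach, (low, high) in annual_cost.items():
    print(approach, percent_cheaper(high, ai_high), "to", percent_cheaper(low, ai_low))
# manual 68 to 85
# traditional 86 to 94
```

Swap in your own annual figures to see how the comparison holds up for your team.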

What the data says about detection rates

Cost matters, but only if the approach actually catches bugs.

Manual testing has a defect detection rate of about 30%. That's not a knock on testers. It's a reflection of human attention limits over hundreds of test cases. Code inspections detect about 60% of defects, but those are a different activity.

Traditional automation detects roughly 55% of bugs. Higher than manual because automated tests execute identically every time and don't get tired. Lower than you'd expect because automated tests only check what they're told to check. If nobody wrote an assertion for a specific failure mode, the automation won't catch it.

AI-powered testing pushes detection rates to 90 to 95% for the repetitive regression work. The AI agent doesn't just check predefined assertions. It reads the page, notices unexpected states, catches visual anomalies, and flags behaviors that look wrong even if nobody specifically told it to look for them. It handles the exhaustive, repetitive checking so your QA team can focus on the exploratory testing and usability judgment that actually needs human intelligence.

The hybrid approach most teams actually need

Here's the honest answer: you probably need more than one approach.

AI-powered testing should handle the bulk of your E2E coverage. Signup flows, checkout flows, dashboards, CRUD operations, cross-browser checks. These are the high-volume, high-value tests that need to run on every deploy. Set them up once, schedule them, and let them run.

Manual testing should cover what AI can't: usability reviews, accessibility spot-checks, "does this feel right" judgment calls, and exploratory testing of new features where the expected behavior isn't fully defined yet. Budget 10 to 20% of testing effort for manual work, focused on the areas where human judgment adds the most value.

Traditional automation still has a place for data-heavy testing. If you need to run the same form submission with 500 different input combinations, a parameterized Playwright test is more efficient than 500 AI agent runs. Same for performance testing, load testing, and deep API validation.
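At its core, a parameterized test is one test body run over a table of inputs. The sketch below shows that shape in plain Python with no framework. `submit_signup_form` is a hypothetical stand-in for driving the real form, and the field values are made up. In a real suite, your test framework's parameterization feature and a browser driver would take the place of the loop and the stub.

```python
from itertools import product


# Hypothetical stand-in for "submit the form and report whether it was accepted".
def submit_signup_form(email: str, plan: str, seats: int) -> bool:
    return "@" in email and plan in {"free", "pro"} and 1 <= seats <= 100


# Input values per field, each mapped to whether that value alone is valid.
emails = {"a@example.com": True, "no-at-sign": False, "": False}
plans = {"free": True, "pro": True, "enterprise": False}
seats = {0: False, 1: True, 100: True, 101: False}


def run_matrix() -> list[tuple]:
    """Run every combination and return the ones whose result was unexpected."""
    failures = []
    for email, plan, seat_count in product(emails, plans, seats):
        # A submission should be accepted only if every field is valid.
        expected = emails[email] and plans[plan] and seats[seat_count]
        actual = submit_signup_form(email, plan, seat_count)
        if actual != expected:
            failures.append((email, plan, seat_count))
    return failures


total = len(emails) * len(plans) * len(seats)
print(f"{total} combinations, {len(run_matrix())} unexpected results")
```

Three short value lists already produce 36 combinations. That multiplication is why scripted, data-driven tests stay the cheaper option for this kind of exhaustive input coverage.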

The split that works for most teams: 70% AI-powered, 20% manual, 10% traditional automation. The exact ratio depends on your product, team size, and what you're testing.

Getting your QA team's time back

If your QA engineers are spending most of their week on regression, here's how to shift that balance:

Week one: Pick your five most critical user flows. Describe each one in plain English. Run them through an AI testing platform. Compare the results to your existing coverage. You can run your first test on Test-Lab in under two minutes.

Month one: Hand your regression suite to AI-powered testing. Your QA team now has their time back for the work that actually needs their expertise: exploratory testing, usability reviews, testing new features where the expected behavior isn't fully nailed down yet.

Month three: Evaluate the ROI. If you're like most teams, you'll have cut testing costs by 50 to 80% while catching more bugs than before. Your QA engineers are doing more impactful work because they're not grinding through the same 250 test cases every cycle. The automation engineers are building features instead of fixing selectors.

Repetitive regression testing is a bad use of human talent. The goal isn't fewer QA engineers. It's QA engineers doing work that's worth their skill set.

See the cost difference for yourself. Try Test-Lab and run your first AI-powered E2E test in under two minutes. Then compare: time to create, time to maintain, bugs caught.