You've narrowed it down to three platforms. You've sat through the demos, skimmed the feature matrices, and read the marketing pages where every tool claims to be AI-native, self-healing, and easy to set up.

None of that helps you pick one.

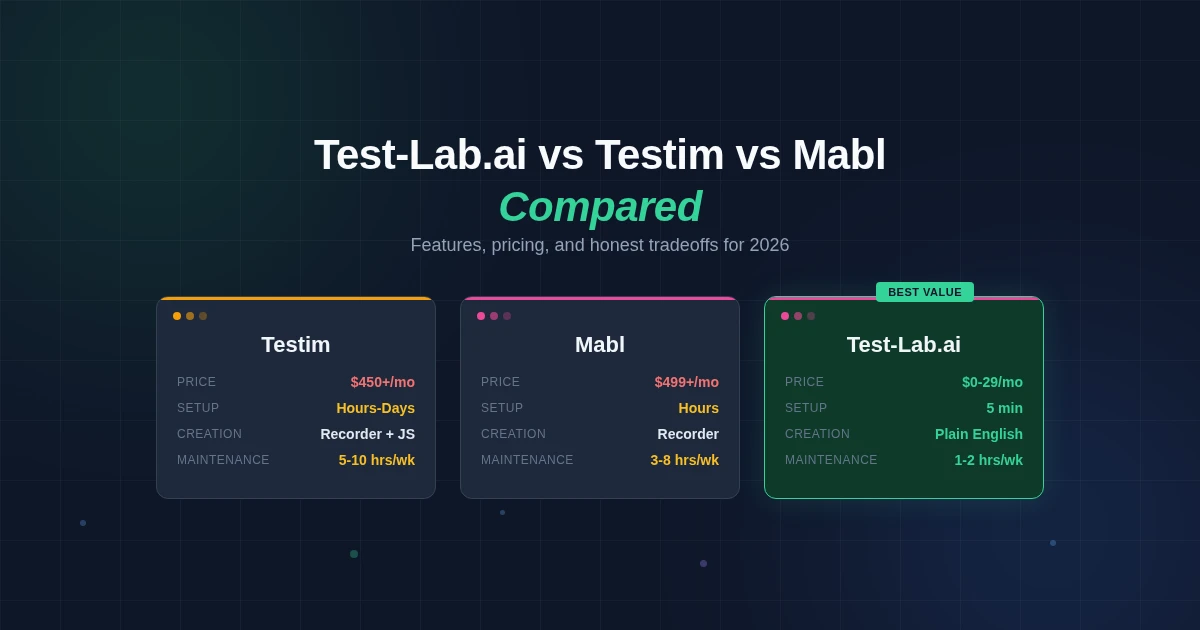

We're going to compare Test-Lab.ai, Testim, and Mabl by what actually matters: how you create tests, what happens when your UI changes, how fast you get value, and what it costs. We built Test-Lab, so we're biased. We'll be upfront about where the other tools are stronger.

The fundamental difference

These three platforms represent different generations of thinking about test automation.

Testim started as a record-and-replay tool with AI-powered smart locators. You record browser interactions. AI makes the selectors more resilient. When the UI changes, the smart locators try to find the right element anyway. Works well when the changes are small. Struggles when they're not.

Mabl took the recorder approach further by adding native API testing, visual regression, and a tighter CI/CD integration story. The auto-healing is solid. The unified platform means you're not stitching together multiple tools. But you're still fundamentally recording interactions and maintaining those recordings.

Test-Lab.ai skips recording entirely. You describe what to test in plain English. An autonomous AI agent opens a real browser and executes the test like a human would. No selectors. No recordings. No syntax to learn. The agent understands the UI, so when the UI changes, the test keeps working without any healing logic at all.

The difference isn't incremental. It's architectural. Testim and Mabl add AI to traditional test automation. Test-Lab replaces the traditional approach with AI as the execution engine.

Test creation

This is where you'll feel the difference on day one.

Testim

You open the Testim recorder, navigate to your app, and click through the flow you want to test. The recorder captures each step. You review the recorded steps, add assertions, and configure test data. For straightforward flows, this takes 30 minutes to an hour per test.

The friction shows up with complex flows. SPAs with dynamic content, multi-step wizards, authentication redirects, modals inside modals. The recorder misses things. You end up editing JavaScript snippets to handle the gaps. Testim's Copilot feature helps by generating test steps from natural language prompts, but it's an addition to the recorder workflow, not a replacement for it.

Mabl

Similar to Testim. You use a visual recorder called the Trainer to capture interactions. Mabl's Trainer is well-designed and handles more edge cases out of the box than most recorders. Adding assertions and variables feels natural. API test steps can be mixed into browser flows, which is a real advantage over Testim.

Same limitations apply, though. Complex dynamic content requires manual intervention. When the recorder doesn't capture a flow correctly, you're debugging recorded steps rather than writing fresh logic. The learning curve is real. Expect a few days before your team is comfortable creating tests independently.

Test-Lab.ai

You write a sentence: "Go to staging.myapp.com, log in with test@example.com, navigate to the billing page, upgrade to the Pro plan, and verify the confirmation screen shows the correct price."

That's the test. The AI agent handles navigation, waits for loading states, interacts with form fields, clicks buttons, and captures evidence at every step. No recording session. No step-by-step configuration. If you can describe the flow in English, you can test it.

The tradeoff is execution time. A recorded test in Testim or Mabl might execute in 30 to 90 seconds. The same flow in Test-Lab takes 2 to 5 minutes in quick mode because the agent reads and understands each page rather than jumping to known selectors. For most teams, the creation speed advantage (minutes vs. hours) more than compensates.

Self-healing and maintenance

This is where testing tools earn or lose their keep. 60-80% of test automation effort goes to maintenance, not creation. The best test creation experience in the world doesn't matter if every UI change breaks half your suite.

Testim

Testim's smart locators use multiple attributes (CSS selectors, XPath, text content, visual position) to identify elements. When one attribute changes, the locator falls back to others. This handles minor changes well. A button that moves from the left sidebar to a top nav usually still gets found.

It breaks down on larger redesigns. If a page layout changes significantly, or if elements get restructured inside new components, the smart locators fail. You manually update the affected steps. For a 100-test suite on a fast-moving product, expect 5 to 10 hours per week on maintenance.
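To make the fallback idea concrete, here's a minimal Python sketch. It is not Testim's actual implementation: the `Strategy` class, the `find_with_fallback` function, and the toy page data are hypothetical names made up for illustration. It shows an ordered list of locator strategies where a small change is survived by a later strategy and a redesign defeats all of them.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Toy stand-in for a DOM node: a plain dict of attributes (an assumption,
# real tools work against a live browser).
Element = dict


@dataclass
class Strategy:
    name: str
    matches: Callable[[Element], bool]


def find_with_fallback(
    elements: list[Element], strategies: list[Strategy]
) -> tuple[Optional[Element], Optional[str]]:
    """Try each strategy in order; return the element and the strategy that found it."""
    for strategy in strategies:
        candidates = [el for el in elements if strategy.matches(el)]
        if len(candidates) == 1:  # accept only an unambiguous match
            return candidates[0], strategy.name
    return None, None


# Locator attributes captured when the test was recorded (hypothetical values).
strategies = [
    Strategy("css id", lambda el: el.get("id") == "submit-btn"),
    Strategy("dom path", lambda el: el.get("path") == "/aside/form/button"),
    Strategy("visible text", lambda el: el.get("text") == "Submit"),
]

# Small change: the button moved to the top nav and its id was renamed,
# but the visible text survived.
page_after_small_change = [
    {"id": "cancel", "path": "/nav/button[1]", "text": "Cancel"},
    {"id": "primary-action", "path": "/nav/button[2]", "text": "Submit"},
]
element, used = find_with_fallback(page_after_small_change, strategies)
print(used)  # visible text

# Redesign: id, position, and text all changed, so every strategy misses.
page_after_redesign = [
    {"id": "cta", "path": "/main/section/a", "text": "Complete Purchase"},
]
print(find_with_fallback(page_after_redesign, strategies))  # (None, None)
```

The second case is the one that turns into manual maintenance: once every recorded attribute has changed, there is nothing left to fall back to.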

Mabl

Mabl's auto-healing is comparable to Testim's. Multiple locator strategies, automatic fallback, and a reliability score that warns you when a locator is getting fragile. Mabl also adds visual change detection, which catches layout shifts that functional tests might miss.

Maintenance burden is similar to Testim. Maybe slightly lower because the visual regression layer catches issues earlier, before they cascade into multiple test failures. Budget 3 to 8 hours per week for a 100-test suite.

Test-Lab.ai

There are no selectors to heal because there are no selectors. The AI agent finds elements by understanding what they are, not where they sit in the DOM. A "Submit" button is a "Submit" button whether it's a `<button>`, an `<a>` tag styled as a button, or a `<div>` with an `onClick` handler. Self-healing isn't a feature we built. It's a consequence of the architecture.

Maintenance is near zero for UI changes. You only update a test when the business logic changes. If the checkout flow adds a new step, you add a sentence to your test description. If the button text changes from "Buy Now" to "Complete Purchase," the agent adapts automatically. For a 100-test suite, expect 1 to 2 hours per week, mostly reviewing results and updating tests for intentional flow changes.

CI/CD integration

How fast you can plug testing into your deployment pipeline determines whether tests actually run or just exist on paper.

Testim

Testim integrates with most major CI/CD systems through a CLI tool and REST API. Configuration means installing the Testim CLI, configuring auth tokens, setting up test suite execution as a pipeline step, and mapping test environments to your deployment stages. Expect a few hours to get a basic pipeline running, longer if you need parallel execution across multiple browsers, custom reporting, or integration with Tricentis qTest for test management.

The CLI approach is flexible but requires someone comfortable with pipeline configuration. Documentation is solid for common CI systems but thin for edge cases. Once configured, execution is reliable. The challenge is getting there.

Mabl

Mabl's CI/CD integration is arguably its best feature. Native integrations exist for GitHub Actions, Jenkins, CircleCI, GitLab, Azure DevOps, Bitbucket Pipelines, and others. The setup is smoother than Testim's CLI approach because Mabl supports deployment events as first-class triggers. Push a deploy to staging, and Mabl automatically runs the test plan associated with that environment.

Results flow back into your pipeline with pass/fail gates. The deployment-aware architecture means Mabl knows which version of your app it's testing, which makes debugging failures faster. Setup takes an hour or two for basic integration, half a day for full configuration with custom environments, branch-based testing, and deployment tracking. Worth the investment if CI/CD integration is your top priority.

Test-Lab.ai

One YAML step. In GitHub Actions, you add the Test-Lab action to your workflow file, pass your API key and test plan IDs, and you're done. The action runs your tests, waits for results, and fails the build if any test fails. Same deal for GitLab CI, CircleCI, or any system that can make an API call.

No environment mapping, no browser grid configuration, no test runner installation. We cover the full setup in our CI/CD integration guide. Five minutes from "I want tests in my pipeline" to tests actually running in your pipeline. The tradeoff is fewer knobs to turn. If you need granular control over execution environments, browser versions, and parallel distribution, the enterprise tools give you more options.
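For CI systems without a ready-made action, the "run, wait, fail the build" pattern is simple enough to sketch in Python. This is not the Test-Lab action or API: `wait_and_gate`, the `fetch_status` callable, and the shape of the status dictionary are all hypothetical, chosen only to illustrate the polling-and-gating logic. In a real pipeline, `fetch_status` would wrap whatever HTTP call your testing service documents.

```python
import time
from typing import Callable


def wait_and_gate(
    fetch_status: Callable[[], dict],
    poll_seconds: float = 5.0,
    timeout_seconds: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll until the run finishes, then return a process exit code.

    0 = all tests passed, 1 = at least one test failed, 2 = timed out.
    """
    waited = 0.0
    while waited <= timeout_seconds:
        status = fetch_status()
        if status["state"] == "finished":
            failed = [t["name"] for t in status["tests"] if not t["passed"]]
            for name in failed:
                print(f"FAILED: {name}")
            return 1 if failed else 0
        sleep(poll_seconds)
        waited += poll_seconds
    print("Timed out waiting for results")
    return 2


# Demo with canned responses instead of a real service (hypothetical data).
responses = iter([
    {"state": "running", "tests": []},
    {"state": "finished", "tests": [
        {"name": "login", "passed": True},
        {"name": "checkout", "passed": False},
    ]},
])
exit_code = wait_and_gate(lambda: next(responses), sleep=lambda _: None)
print(exit_code)  # 1
```

A pipeline step would end with `sys.exit(exit_code)`, so a non-zero code fails the build the same way a failing unit test would.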

Pricing

This is where it gets interesting.

| | Testim | Mabl | Test-Lab.ai |
|---|---|---|---|
| Entry price | ~$450/month (sales call required) | $499/month (Starter) | $0/month (pay-as-you-go) |
| Mid-tier | Custom quote | $1,199/month (Professional) | $29/month (BYOK Pro) |
| Enterprise | Custom quote | Custom quote | Custom quote |
| Free trial | Limited free plan | 14-day trial | $3 free credits, no card required |
| Pricing model | Subscription (seats + features) | Subscription (features + execution) | Pay-per-test or subscription |

For a team running 50 E2E tests weekly, the annual cost difference looks roughly like this:

Testim and Mabl will run you $6,000 to $15,000 per year in platform costs alone. Add the maintenance engineering hours (at $75/hour average), and total cost of ownership for a 50-test suite lands at $20,000 to $50,000 annually.

Test-Lab on pay-as-you-go costs a few dollars per test run. For 50 weekly tests, that's roughly $1,500 to $5,000 per year including credits, with minimal maintenance overhead. The $29/month BYOK Pro plan drops per-test costs further if you bring your own API keys.

The gap isn't just pricing philosophy. It reflects the maintenance difference. When you're not spending engineering hours fixing broken selectors, total cost drops fast.
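If you want to redo this math with your own numbers, here's a rough Python sketch. The `annual_tco` function is a hypothetical helper, and the inputs are assumptions: platform costs come from the ranges above, the $75/hour rate is the one quoted above, and the weekly maintenance hours reuse the 100-test-suite estimates from the maintenance section, so treat the outputs as ballpark figures rather than quotes.

```python
WEEKS_PER_YEAR = 52


def annual_tco(
    platform_per_year: float,
    maintenance_hours_per_week: float,
    hourly_rate: float = 75.0,
) -> float:
    """Platform cost plus the engineering time spent maintaining the suite."""
    maintenance = maintenance_hours_per_week * WEEKS_PER_YEAR * hourly_rate
    return platform_per_year + maintenance


# Recorder-based tools: $6,000 to $15,000 platform, 3 to 10 hours/week (assumed).
recorder_low = annual_tco(6_000, 3)
recorder_high = annual_tco(15_000, 10)

# Agent-based: $1,500 to $5,000 usage, 1 to 2 hours/week (assumed).
agent_low = annual_tco(1_500, 1)
agent_high = annual_tco(5_000, 2)

print(f"Recorder-based: ${recorder_low:,.0f} to ${recorder_high:,.0f}")
# Recorder-based: $17,700 to $54,000
print(f"Agent-based:    ${agent_low:,.0f} to ${agent_high:,.0f}")
# Agent-based:    $5,400 to $12,800
```

In both cases the maintenance term is larger than the platform fee, which is why hours per week matter more to the total than the sticker price.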

Where Testim and Mabl are stronger

We said we'd be honest. Here's where the other tools have real advantages.

Execution speed. Testim and Mabl execute tests in 30 to 90 seconds because they jump directly to known elements. Test-Lab's AI agent takes 2 to 5 minutes per test. If you run 500 tests on every commit and need results in under 10 minutes, selector-based tools with heavy parallelization are faster. Test plan analysis helps prioritize which tests run when, but the per-test speed gap is real.

Enterprise maturity. Testim (backed by Tricentis, which announced a $1.33 billion strategic investment in late 2024) and Mabl have years of enterprise deployments behind them. Role-based access control, audit logging, compliance certifications, dedicated support teams. All mature. Test-Lab offers these features but has less track record at Fortune 500 scale.

API testing. Mabl includes native API testing alongside browser tests. You can chain API calls and browser interactions in a single flow. Test-Lab is browser-focused. API validation happens through the browser (checking that the right data appears on screen), not by calling endpoints directly.

Visual regression. Mabl includes pixel-level visual comparison as a core feature. Test-Lab's agent captures screenshots at every step and you can compare them across runs, but there's no automated pixel-diff analysis the way Mabl does it.

Data-driven testing at scale. If you need to run the same flow with 500 input combinations, Testim and Mabl handle parameterized tests natively. Test-Lab supports variable substitution, but managing large data matrices isn't as polished as in the enterprise tools.
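To put the execution speed point in numbers, here's a small Python sketch of how many parallel workers it takes to finish a suite inside a deadline. The `workers_needed` function is a hypothetical helper, and it assumes tests are independent, roughly equal in length, and have no queueing or startup overhead, which real pipelines won't fully satisfy.

```python
import math


def workers_needed(
    test_count: int, seconds_per_test: float, deadline_seconds: float
) -> int:
    """Minimum parallel workers so every test finishes before the deadline."""
    if seconds_per_test > deadline_seconds:
        raise ValueError("a single test already exceeds the deadline")
    # Each worker runs tests back to back, so it fits this many in the window.
    tests_per_worker = math.floor(deadline_seconds / seconds_per_test)
    return math.ceil(test_count / tests_per_worker)


DEADLINE = 10 * 60  # results in under 10 minutes

# 500 tests on a selector-based tool at 30 to 90 seconds each.
print(workers_needed(500, 30, DEADLINE))   # 25
print(workers_needed(500, 90, DEADLINE))   # 84

# The same 500 tests on an agent at 2 to 5 minutes each.
print(workers_needed(500, 120, DEADLINE))  # 100
print(workers_needed(500, 300, DEADLINE))  # 250
```

At that suite size the agent needs several times more parallel capacity to hit the same deadline, which is the gap prioritizing a subset of tests per commit is meant to close.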

Where Test-Lab.ai wins

Time to first test. Minutes, not hours or days. Describe a flow, run it, get results. No recorder training, no platform learning curve, no sales call.

Maintenance cost. Near zero for UI changes. The architectural advantage of agent-based testing means you're not maintaining selectors, locators, or recorded steps. You maintain test descriptions, which only change when business logic changes.

Accessibility. Anyone who can describe a user flow can write a test. Product managers, founders, customer support leads. No QA background required. No low-code syntax to learn.

Total cost of ownership. Low platform cost plus low maintenance cost makes Test-Lab dramatically cheaper for teams running fewer than 200 tests. The math gets closer for very large suites where per-test costs add up.

Setup overhead. One YAML step for CI/CD. No infrastructure to configure. No browser grids to manage. No test environments to provision.

How to choose

Choose Testim if you're an enterprise team already invested in the Tricentis ecosystem. The integration with other Tricentis tools (qTest, NeoLoad, LiveCompare) creates real value if you're using the full suite. You have a dedicated QA team comfortable with low-code tooling, and budget isn't the primary constraint.

Choose Mabl if you're a DevOps-oriented team that values unified testing (browser + API + visual) in one platform. Mabl's deployment-triggered testing and CI/CD integration depth are best-in-class among recorder-based tools. You want a proven platform with a track record of enterprise reliability.

Choose Test-Lab.ai if you want testing without the overhead. You're a developer, founder, or small-to-mid team that needs solid E2E testing but doesn't have the bandwidth to learn, configure, and maintain a traditional automation platform. You value fast setup, low maintenance, and honest pricing over enterprise feature depth. See how we compare in detail.

Real-world decision framework

Feature comparisons only get you so far. Here are the questions that actually determine which platform fits.

How many tests do you need? Under 100, Test-Lab's per-test pricing and near-zero maintenance make it the cheapest option by a wide margin. Between 100 and 500, all three platforms are competitive depending on your maintenance costs. Above 500, Testim and Mabl's flat-rate enterprise pricing can work out cheaper if you have the QA staff to maintain the suites.

How fast does your UI change? If you ship daily with frequent UI updates, selector-based tools will generate constant maintenance work. The faster your UI changes, the more an agent-based approach saves. If your UI is stable and only changes monthly, the maintenance advantage shrinks and execution speed becomes more important.

Who will write and maintain tests? If you have dedicated QA engineers comfortable with low-code tools, Testim and Mabl give them deep control. If tests will be written by developers, product managers, or founders who have other priorities, Test-Lab's plain English approach removes the learning curve entirely.

What's your budget reality? If you can spend $500+/month on testing infrastructure and have QA staff to use it, Testim and Mabl are proven platforms. If every dollar matters and you need testing to just work without dedicated staff, Test-Lab's free tier and $29/month pro plan are in a different league.

Try the same test on all three

The best way to decide is to run your hardest test scenario on each platform. Not the login page. The flow that breaks. The one with the multi-step form, the dynamic content, the third-party embed.

You can run your first test on Test-Lab in under two minutes. No signup for the first five demo runs. See how the AI agent handles your application, not a polished demo. Then try the same scenario on Testim's free plan and Mabl's trial. Compare setup time, maintenance after a week, and what you get for the price.

The right tool is the one that actually gets used. The fanciest testing platform in the world delivers zero value if it sits unconfigured because setup took too long, or if test failures get ignored because the maintenance burden made the suite unreliable.

Pick the tool your team will actually run. Every day. On every deploy.
